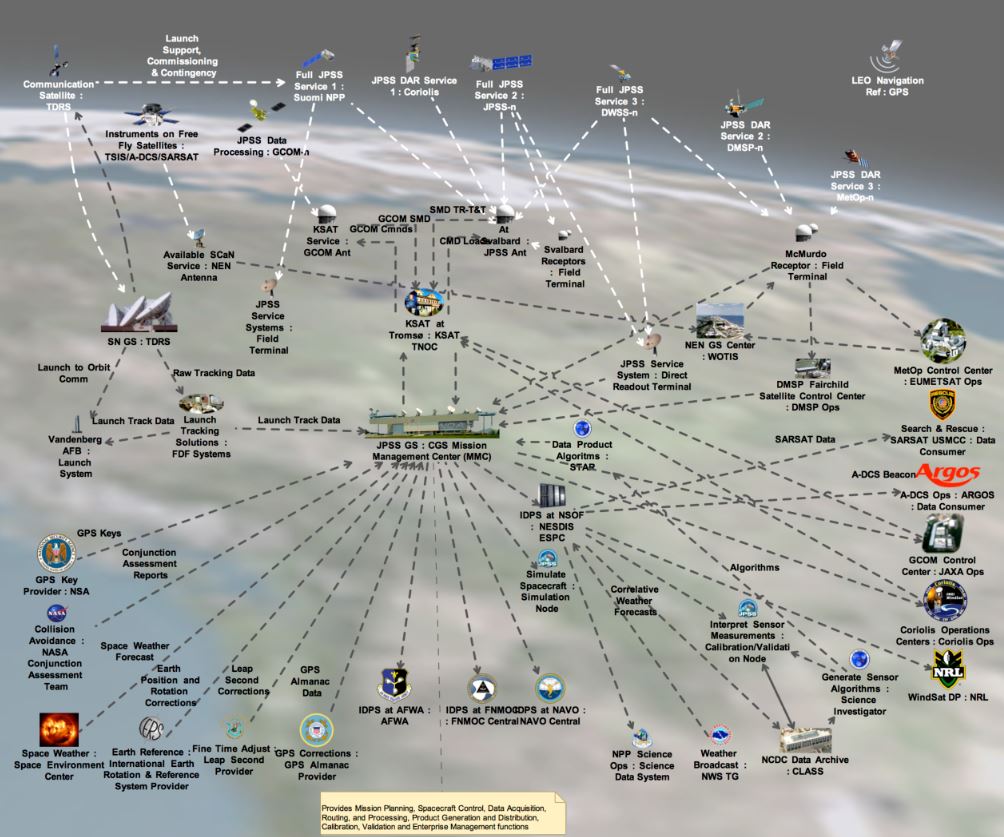

In a modern battle scenario, the proliferation of Electronic Warfare devices, electronic emitters and platforms, as well as technologies to influence the EM spectrum, produces vast amounts of data. Devices such as jammers, radar jammers, countermeasure dispensing systems, decoys, flare/chaff dispensers, anti-radiation missiles, antennas, IR missile warning systems, laser warning systems, interference mitigation systems, electromagnetic pulse weapons, sensors, ECCM, etc., create a dense and hostile ecosystem and generate gigabytes of streaming real-time data. The above image, and other images in this blog, give an idea of how complex things are.

According to a report from the NavSea Warfare Center, “The goal of using data fusion in multi-sensor environments is to obtain a lower detection error probability and a higher reliability by using data from multiple distributed sources.” But data fusion may not be enough. This short blog shows how, using novel data processing techniques such as QCM (Quantitative Complexity Management), it is possible to extract systemic and strategic information and new knowledge out of huge amounts of raw data.

One cannot but recognize an analogy with medicine, where extremely sophisticated diagnostic tools exist (MRI, CAT-Scan, PET) which produce stunning images, but at the end of the day it is up to the specialist to come up with a strategy. So how can one synthesize strategies automatically from tonnes of raw sensor data in the context of Electronic Warfare? Fancy data visualizations and representations are not enough. A better understanding of the kill chain can be achieved using novel data analysis that produces systemic information.

This is how we proceed. A hypothetical scenario and scheme are illustrated below. Thousands of platforms, sensors, emitters and targets produce huge volumes of data that hide millions of interdependencies, which change over time, often very quickly.

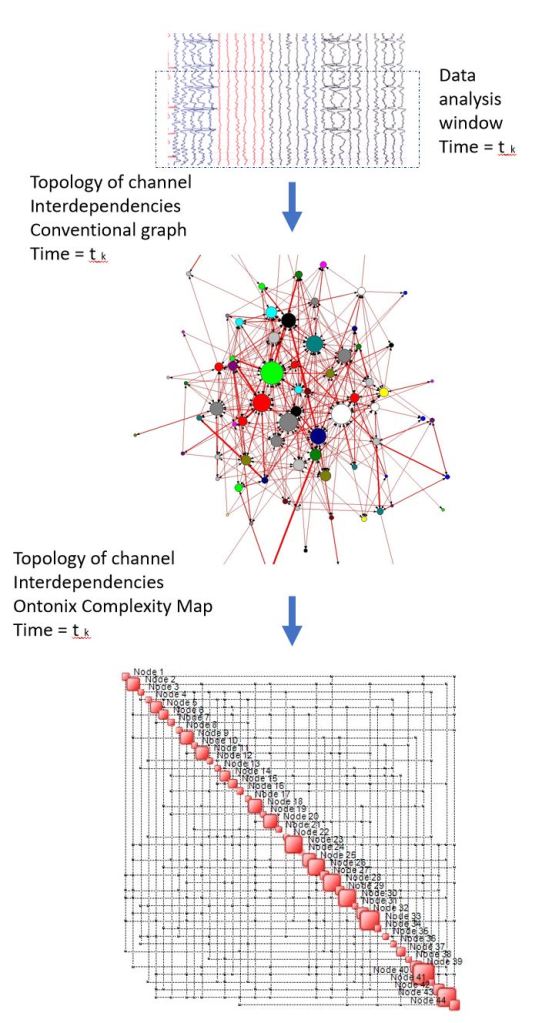

This data is processed by our QCM engine, OntoNet, using a moving window technique, as illustrated below. At each position of the window, OntoNet determines the instantaneous topology of the interdependencies between all data channels. As of today, the largest case we have processed had just over 300 000 data channels. An example of such a topology is shown below in two representations: the conventional one, a simple graph, and a Complexity Map, introduced by Ontonix. The topology is recomputed at each step and this can be done with an almost arbitrary frequency, provided there are sufficient computational resources.
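The internals of OntoNet are not described here, so the following is only a rough sketch of the moving-window idea and not the QCM algorithm. It assumes a histogram-based mutual information estimate as a stand-in dependency measure (chosen because it can pick up non-linear relationships), and the names `mutual_information`, `dependency_matrix` and `sliding_topologies` are hypothetical.

```python
import numpy as np

def mutual_information(x, y, bins=8):
    """Histogram estimate of mutual information (in nats) between two 1-D samples.
    Stand-in measure only; it is NOT the measure used by QCM."""
    joint, _, _ = np.histogram2d(x, y, bins=bins)
    pxy = joint / joint.sum()
    px = pxy.sum(axis=1, keepdims=True)   # marginal of x, shape (bins, 1)
    py = pxy.sum(axis=0, keepdims=True)   # marginal of y, shape (1, bins)
    nz = pxy > 0                          # skip empty cells to avoid log(0)
    return float(np.sum(pxy[nz] * np.log(pxy[nz] / (px @ py)[nz])))

def dependency_matrix(window, bins=8):
    """Symmetric channel-by-channel dependency matrix for one window.
    `window` has shape (n_samples, n_channels)."""
    n = window.shape[1]
    dep = np.zeros((n, n))
    for i in range(n):
        for j in range(i + 1, n):
            dep[i, j] = dep[j, i] = mutual_information(window[:, i], window[:, j], bins)
    return dep

def sliding_topologies(data, window_size, step):
    """Yield (start_index, dependency_matrix) for each window position."""
    for start in range(0, data.shape[0] - window_size + 1, step):
        yield start, dependency_matrix(data[start:start + window_size])

# Synthetic demo: channel 1 depends non-linearly on channel 0, channel 2 is noise.
rng = np.random.default_rng(0)
t = rng.normal(size=500)
data = np.column_stack([t, t**2 + 0.1 * rng.normal(size=500), rng.normal(size=500)])
results = list(sliding_topologies(data, window_size=200, step=100))
```

Each element of `results` holds one snapshot of the dependency structure. The pairwise loop scales quadratically with the number of channels, which is one reason the update frequency depends on available compute.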

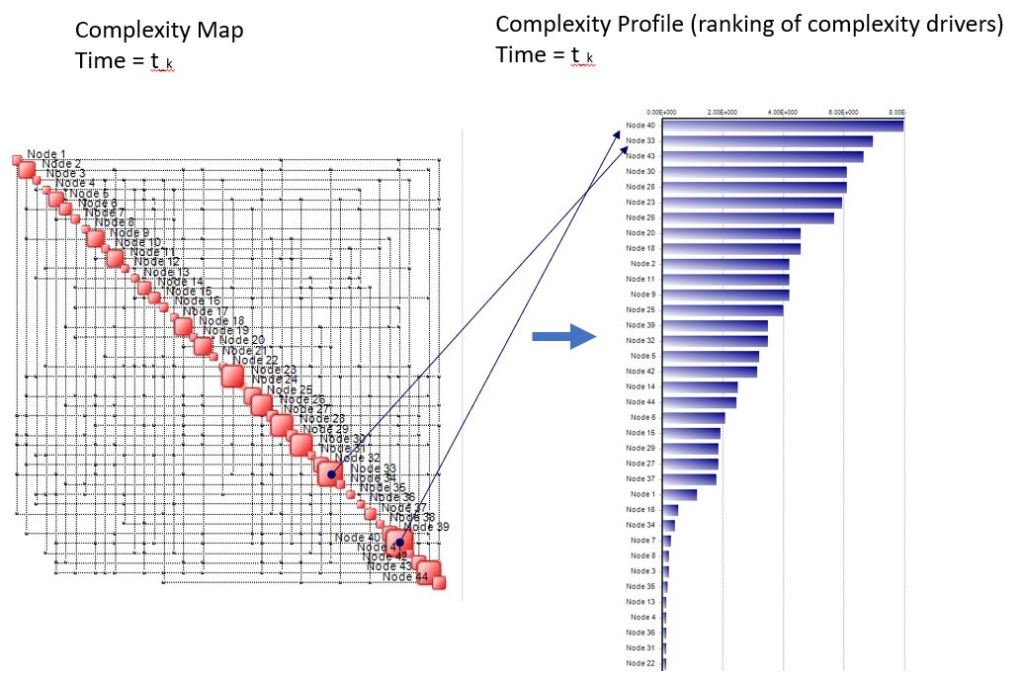

The map indicates where the action is, that is, which data channels run the show, so to speak. These are the large boxes on the map diagonal, referred to as complexity hubs. But a huge, dense map is of little use, especially on the battlefield. The next step is extraction of the Complexity Profile, a ranking of complexity drivers, which is illustrated below. The action is always at the top of the chart.
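As an illustration of what a ranking of drivers looks like in practice, here is a minimal sketch. It assumes a symmetric dependency matrix is already available and scores each channel by its share of the total off-diagonal weight. That scoring rule, the function name `complexity_profile` and the channel names are assumptions made for the example, not the QCM definition.

```python
import numpy as np

def complexity_profile(dep, names):
    """Rank channels by their percentage share of total dependency weight.
    Hypothetical scoring rule used for illustration only."""
    dep = np.asarray(dep, dtype=float)
    weights = dep.sum(axis=1) - np.diag(dep)      # ignore self-dependency
    total = weights.sum()
    share = 100.0 * weights / total if total > 0 else np.zeros_like(weights)
    order = np.argsort(-share, kind="stable")     # largest contribution first
    return [(names[i], float(share[i])) for i in order]

# Toy 3-channel dependency matrix (made-up numbers).
dep = np.array([[0.0, 0.8, 0.1],
                [0.8, 0.0, 0.3],
                [0.1, 0.3, 0.0]])
profile = complexity_profile(dep, ["radar_A", "jammer_B", "decoy_C"])
for name, pct in profile:
    print(f"{name}: {pct:.1f}%")
```

In this toy case `jammer_B` ends up at the top of the chart because it carries the largest share of the interdependencies.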

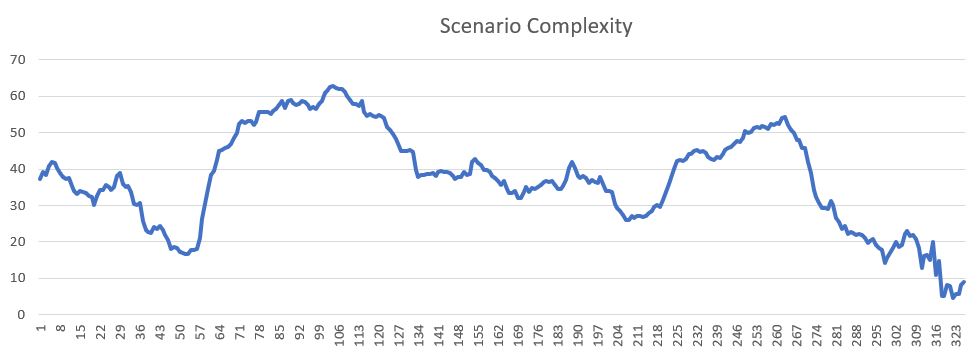

Obviously, both the Complexity Map and Complexity Profile change over time. Below is an example of a scenario complexity profile over time – basically each slice of the waterfall plot is a Complexity Profile:

To win a battle means to bring its complexity down or, at least, to be able to control it, just as in the case of hospitalized ICU patients. High-complexity patients are difficult to fathom, manage and treat. Battle management is no different.

What is interesting is mainly this:

- Who drives complexity – complexity hubs are great targets for attack

Most Damage is Done Attacking High-Complexity Targets

Along the same line, high complexity assets are those that deserve the greatest effort and investment in terms of defense. A Complexity Profile provides information on how to distribute one’s attack budget.

- How complexity changes over time – the best time to attack is when complexity is greatest, as this can potentially maximize damage on the enemy side

- Look out for rapid increases in complexity – these tend to anticipate crises

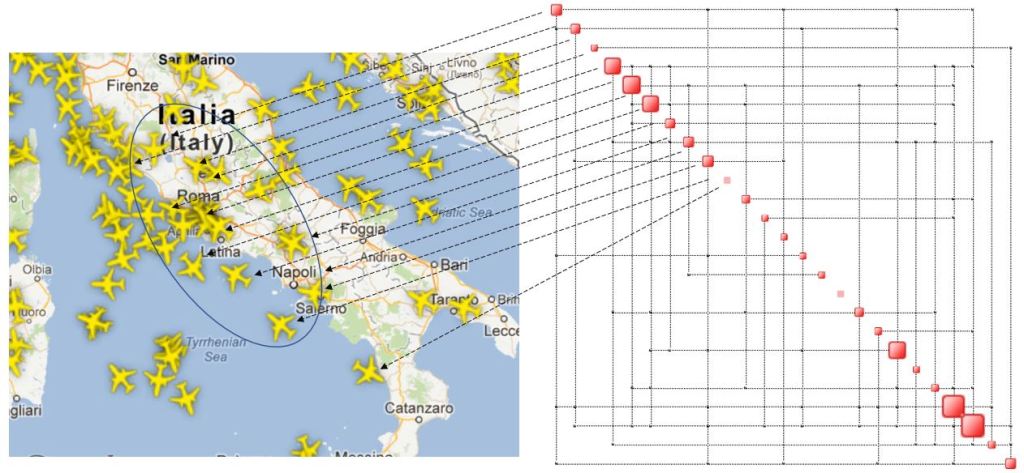

Examples of scenario complexity over time are shown below. These have been computed based on sensor data. The first example is based on radar output relative to positions of a number of aircraft in a given portion of airspace.

The chart below illustrates a complexity map of a portion of airspace based on aircraft positions. The flights corresponding to the large squares on the diagonal are those that necessitate more Air Traffic Control effort. A sudden airspace complexity increase suggests a coordinated attack.

The second example shows a sudden jump in complexity, indicating a rise in “activity” which suggests a potential attack. This kind of strategic information, based on analysis of real-time data from numerous sensors, is common to many types of systems.

Are we under attack?

In fact, we observe similar behavior in stock markets just before they crash and in ICU patients before they become unstable. A rapid increase in complexity is rarely a good omen.
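Spotting such a jump in a stream of complexity values can be sketched very simply. The example below assumes a scalar complexity value is available at each time step and flags any step where the value has risen by more than a chosen fraction relative to a few steps earlier. The function name `rapid_increases`, the `lookback` and `rel_threshold` parameters and the sample values are all hypothetical, and a real system would need thresholds tuned to the scenario.

```python
def rapid_increases(series, lookback=3, rel_threshold=0.5):
    """Return indices where the value exceeds the value `lookback` steps
    earlier by more than `rel_threshold` (expressed as a fraction)."""
    alerts = []
    for k in range(lookback, len(series)):
        base = series[k - lookback]
        if base > 0 and (series[k] - base) / base > rel_threshold:
            alerts.append(k)
    return alerts

# Made-up complexity history with a sharp rise around steps 5 to 7.
history = [10.0, 10.2, 9.9, 10.1, 10.4, 13.0, 17.5, 18.0, 18.1, 18.2]
alerts = rapid_increases(history)
print(alerts)  # [6, 7]
```

Once the series settles at its new, higher level the alerts stop, so the flag marks the transition itself and not the level of complexity.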

However, data originating from sensors in a hostile environment can be corrupted by cyberattacks. In addition, sensors can malfunction, making it even more difficult to extract reliable and useful information. In such circumstances, speaking of accuracy and precision is impossible. The Principle of Incompatibility states that:

High Precision is Incompatible with High Complexity

EW software companies use words like “accuracy” and “precision” in conjunction with the word “complex”, which makes little sense when complexity becomes really high. The alternative is to give up precision in exchange for systemic, more coarse-grained information which is strategic in nature and which, at the end of the day, can be more useful. Stating that a given “scenario is complex” without providing a measure of its complexity is meaningless.

Complexity profiling of an Electronic Warfare environment can be performed based on:

- threat/emitter/platform type

- sensor type

- position/sector

- frequency range

- etc.
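To make the grouping idea concrete, here is a small sketch that rolls up per-channel contributions by one of the attributes listed above. It assumes each channel already has a percentage contribution and a metadata record. The function name `grouped_profile`, the channel names, the attribute keys and the numbers are invented for the example.

```python
from collections import defaultdict

def grouped_profile(contributions, metadata, key):
    """Sum per-channel contributions by a metadata attribute and rank the groups."""
    totals = defaultdict(float)
    for channel, value in contributions.items():
        totals[metadata[channel][key]] += value
    return sorted(totals.items(), key=lambda kv: kv[1], reverse=True)

# Hypothetical per-channel contributions (percent) and channel metadata.
contributions = {"ch1": 40.0, "ch2": 25.0, "ch3": 20.0, "ch4": 15.0}
metadata = {
    "ch1": {"sensor": "radar", "sector": "north"},
    "ch2": {"sensor": "ir", "sector": "north"},
    "ch3": {"sensor": "radar", "sector": "south"},
    "ch4": {"sensor": "laser", "sector": "south"},
}

by_sensor = grouped_profile(contributions, metadata, "sensor")
by_sector = grouped_profile(contributions, metadata, "sector")
print(by_sensor)  # [('radar', 60.0), ('ir', 25.0), ('laser', 15.0)]
print(by_sector)  # [('north', 65.0), ('south', 35.0)]
```

The same contributions give a different picture depending on the attribute chosen, which is why it can be useful to look at several groupings side by side.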

The conclusion is that, thanks to the QCM, one can envisage automating the response to threats. All it takes is streaming raw data and plenty of compute power. A Complexity Profile of an EW ecosystem can go a long way towards suggesting a Low-Complexity response strategy. Remember, the goal is to reduce scenario complexity, since a less complex scenario is more manageable.

NB: QCM doesn’t use any conventional or linear techniques to measure complexity or derive a Complexity Profile. No linear correlations, no PCA, no cluster analysis and no regression analysis. Moreover, we don’t resort to Machine Learning because, in rapidly changing, hostile and highly complex contexts, there is no time to learn patterns in order to be able to recognize them later. The enemy will make sure of that. The QCM takes care of identifying anomalies the first and only time they show up.

The application described in this short article relies on a next-generation version of the QCM, which we call QCM2.
