As compute power increases, it becomes easy to generate FE meshes quickly and efficiently, which allows analysts to reduce the discretisation error. However, many people overlook the fact that models are already the fruit of sometimes quite drastic simplifications of physical phenomena. For example, the equation of the Euler-Bernoulli beam, the simplest of structural elements, is the result of at least the following assumptions:

- The material is homogeneous.

- The beam is slender.

- The constraints are perfect.

- The material is linear and elastic.

- The effects of shear are neglected.

- Rotational inertia effects are neglected.

- The displacements are small.

- Sections remain plane.

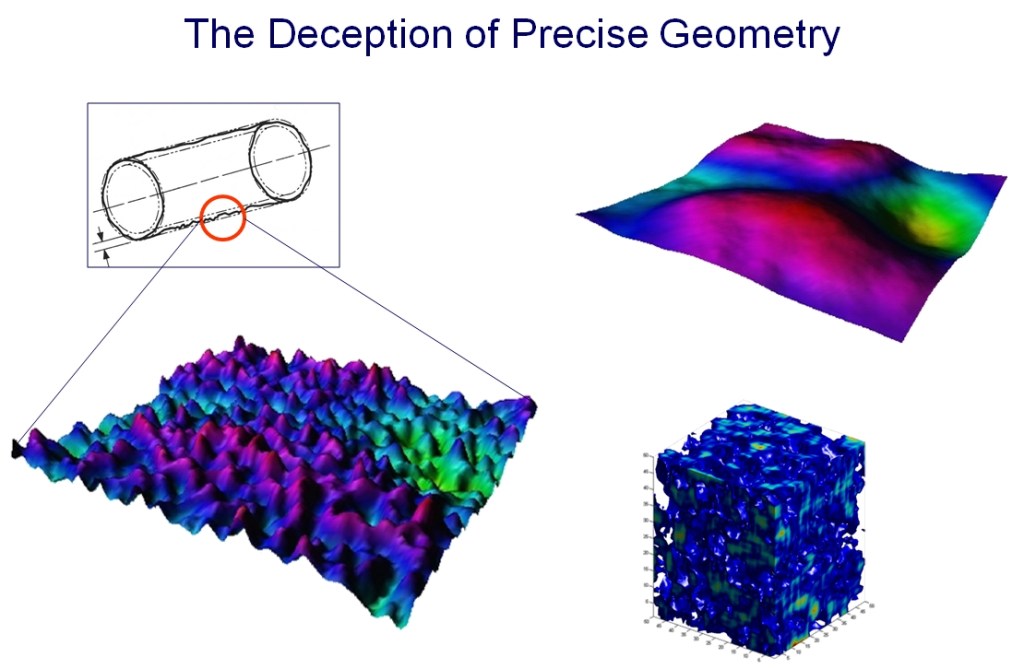
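To get a feel for what a single item on this list can cost, here is a minimal sketch that looks only at the neglected shear deformation. It assumes a rectangular cantilever with a tip load, a Poisson ratio of 0.3 and a shear correction factor of 5/6, and compares the Timoshenko shear term with the Euler-Bernoulli bending deflection. The function name and the chosen slenderness values are illustrative, not taken from any particular code.

```python
def shear_to_bending_ratio(length_to_depth, poisson=0.3, kappa=5.0 / 6.0):
    """Ratio of shear deflection to bending deflection at the tip of a
    rectangular cantilever under a tip load.

    Bending (Euler-Bernoulli): P L^3 / (3 E I)
    Shear (Timoshenko term):   P L / (kappa G A)
    With I/A = h^2 / 12 and G = E / (2 (1 + nu)) the ratio reduces to
    (1 + nu) / (2 kappa) * (h / L)^2.
    """
    return (1.0 + poisson) / (2.0 * kappa) * (1.0 / length_to_depth) ** 2


# Assumed slenderness values, from slender to stocky.
for slenderness in (20, 10, 5, 3):
    ratio = shear_to_bending_ratio(slenderness)
    print(f"L/h = {slenderness:>2}: neglected shear deflection "
          f"= {ratio:.1%} of the bending deflection")
```

For a slender beam this particular contribution stays below one percent, while for a stocky one it approaches ten percent. That is one assumption out of eight, and no amount of mesh refinement will recover it.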

How much physics gets “lost” in the above process? 5%? 10%? Experience suggests that if it is 10% you’re lucky; if it is 5%, you’re a champion. Now, to put this equation to work, one needs to resort to discretisation techniques, such as finite differences or finite elements. This adds, say, another 5% error. Suppose now that you’re running a Design of Experiments study and you use a Response Surface method. That may add another few percent of error: after all, Response Surfaces are mere surrogates of FE models, so they are simpler and cheaper, but less precise. Setting aside round-off and truncation errors, our computer model “misses” at least 15-20% of the physics behind the original phenomenon. As if that were not enough, the inputs that drive models are subject to scatter and uncertainty in material properties, loads, boundary conditions, etc.
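The arithmetic behind that estimate can be sketched in a few lines. The 10% and 5% figures are the ones quoted above; the 3% for the Response Surface is an assumed stand-in for "another few percent". The sketch reports both a plain sum and a compounded loss, where each step removes its share of whatever the previous step retained.

```python
# Error contributions as fractions of the "true" physics.
# 0.10 and 0.05 are the figures quoted in the text; 0.03 is an assumed
# stand-in for "another few percent".
error_budget = {
    "modelling assumptions": 0.10,
    "discretisation": 0.05,
    "response surface": 0.03,
}


def additive_error(budget):
    """Simple sum of the individual contributions."""
    return sum(budget.values())


def compounded_error(budget):
    """Loss when each step removes its share of what is left."""
    retained = 1.0
    for loss in budget.values():
        retained *= 1.0 - loss
    return 1.0 - retained


print(f"additive:   {additive_error(error_budget):.1%}")
print(f"compounded: {compounded_error(error_budget):.1%}")
```

Either way of counting lands in the 15-20% range, before any scatter in the inputs is considered.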

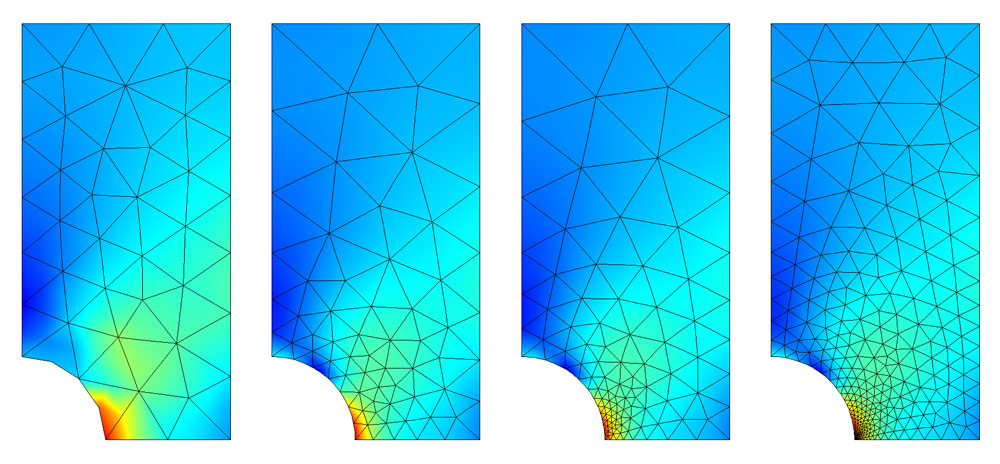

Modern finite element science has reached a level of maturity at which the precision of numerical simulation codes has greatly surpassed that of the data we feed into them. Mesh resolution, or bandwidth, is quite often seen as a measure of technological progress. But now ask yourself this simple question. Suppose you use an FE model to optimize the performance of a product. Suppose that, with respect to the nominal design, you reach an improvement of 10-20%, which, in many cases, is phenomenal. To what extent can you actually trust the result, knowing that the improvement is of the same magnitude as the modeling error? What is the sense in solving very precisely (with a super-fine mesh) an approximate problem with uncertain inputs?
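One crude way to put the question in numbers is to draw an error band around each prediction and check whether the bands overlap. The sketch below assumes a symmetric relative error, arbitrary performance units where higher is better, and a worst-case view in which the errors of the two designs are unrelated. In practice part of the modelling error is common to both designs and cancels, so this is a pessimistic check, not a verdict.

```python
def prediction_band(predicted, relative_error):
    """Range in which the true value may lie, assuming a symmetric
    relative error around the prediction."""
    return predicted * (1.0 - relative_error), predicted * (1.0 + relative_error)


def improvement_is_resolvable(nominal, optimised, relative_error):
    """True only if the two bands do not overlap (higher is better)."""
    _, nominal_high = prediction_band(nominal, relative_error)
    optimised_low, _ = prediction_band(optimised, relative_error)
    return optimised_low > nominal_high


nominal = 100.0    # arbitrary performance units (hypothetical)
optimised = 115.0  # a 15% predicted improvement

for err in (0.20, 0.15, 0.05):
    verdict = improvement_is_resolvable(nominal, optimised, err)
    print(f"model error {err:.0%}: improvement resolvable = {verdict}")
```

With a 15% or 20% error the bands overlap and the predicted gain cannot be told apart from the nominal design; only when the error drops to around 5% does the 15% improvement stand clear.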

Experience suggests, once again, that there is no such thing as a precise model. Models should be realistic, not precise. Once this is realized, CAE science can go in a totally new direction: limiting the bandwidth of FE meshes to reasonable levels, and using CPU resources in a more “horizontal” fashion, for design exploration, stochastic simulation and gaining new knowledge, not for generating more decimals. After all, the closer you look the less you see.
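As a toy illustration of the “horizontal” use of CPU time, the sketch below runs many cheap evaluations of a deliberately simple model (the closed-form Euler-Bernoulli tip deflection of a cantilever) with scattered inputs, instead of one expensive evaluation with nominal inputs. All numbers are assumptions made for the example: a 1 m steel cantilever, a 1000 N tip load with 10% scatter, and a Young's modulus of 210 GPa with 5% scatter, both taken as normally distributed.

```python
import random
import statistics


def tip_deflection(load, length, youngs_modulus, second_moment):
    """Euler-Bernoulli tip deflection of a cantilever under a tip load."""
    return load * length**3 / (3.0 * youngs_modulus * second_moment)


def sample_deflections(n_samples, seed=0):
    """Monte Carlo sampling of the tip deflection with scattered inputs."""
    rng = random.Random(seed)
    results = []
    for _ in range(n_samples):
        load = rng.gauss(1000.0, 100.0)     # N, 10% scatter (assumed)
        modulus = rng.gauss(210e9, 10.5e9)  # Pa, 5% scatter (assumed)
        results.append(tip_deflection(load, 1.0, modulus, 8.0e-7))
    return results


deflections = sample_deflections(10_000)
mean = statistics.mean(deflections)
cov = statistics.stdev(deflections) / mean
print(f"mean tip deflection: {mean * 1e3:.3f} mm")
print(f"coefficient of variation: {cov:.1%}")
```

The spread of the output, driven purely by the inputs, is the kind of knowledge a single super-fine run cannot provide.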
