Article from MSC’s Alpha Magazine, Vol 1, Winter 2003, written by Dr. J. Marczyk.

“Clouds are not spheres, mountains are not cones, coastlines are not circles, and bark is not smooth, nor does lightning travel in a straight line.”

In this somewhat prophetic statement by Benoit Mandelbrot, the discoverer of the fractal geometry of Nature, one may perceive the direction which computer-aided engineering (CAE), and computing in general, will take in the new millennium. While the past decades have established the spectacular success of finite element science in engineering, we still have a long way to go. Fractals, chaos, the principles of uncertainty and of quantum mechanics eventually manifest themselves to the engineer at the macro scale in the form of tolerances and scatter. Revisiting Mandelbrot’s sentence from a more engineering-oriented perspective, one may say that materials are not homogeneous, boundary conditions are not ideal, geometry is not perfect, and loads are subject to unexpected fluctuations. In other words, uncertainty exists.

Uncertainty originates from the very heart of physics and is deeply rooted in the nature of matter. Not surprisingly, it accounts for a huge share of the phenomena we observe. In effect, F=kx and F=ma are only a small part of the picture. In the past, uncertainty has been accommodated in engineering, and in finite element models in particular, via safety factors. This simple stratagem allowed the transformation of a stochastic problem into a deterministic one and, since safety margins were ultimately computed, at the same time induced a false sense of infallibility and security.

However, as we know, Nature offers no free lunch, and the effects of uncertainty are only too well known. There is always something that has been overlooked or left un-modeled, some unfortunate and unanticipated combination of factors and circumstances that finally leads to catastrophic collapse, loss of life, or in the best of cases, an expensive lawsuit or product recall. After all, models are only models. We can’t expect a model to return more than has been hard-wired into it.

Today, however, thanks to spectacular advances in computing technology, uncertainty can be taken into account in the very way it manifests itself in nature. The tremendous advantage of doing this hinges on a very simple but fundamental point – models incorporating uncertainty become extremely realistic. So, what is this new direction in which CAE will undoubtedly evolve? Simulating reality. This white paper describes how the deterministic vision of CAE that has pervaded engineering in the 20th century is gradually giving way to a more natural paradigm – the understanding and management of uncertainty through stochastic simulation. It is virtual product development – for real.

Before we take a closer look at MSC.Robust Design, MSC.Software’s stochastic simulation tool, let us briefly review the rationale behind the management of uncertainty – the foundation of a “new CAE.” The basic principle on which this rationale hinges is the Principle of Incompatibility, coined by L. Zadeh. Essentially, the principle states that high complexity is incompatible with high precision. In other words, the more interacting components a system contains, each of which will of course be built to certain engineering tolerances, the less precise statements we will be able to make about the system’s behavior. No matter how precisely we know the inputs to the system, the outputs will never be determined with arbitrary precision.

This is due to the interplay of complexity and uncertainty. It is a fact of life. There is a point beyond which adding precision to the model ceases

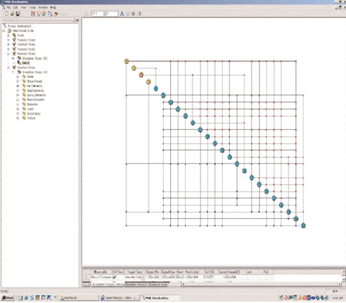

The MSC.Robust Design GUI has been built according to modern standards and is extremely user-friendly and intuitive. Thousands of stochastic variables can be introduced into a large finite element model in a matter of minutes.

to provide more knowledge. It is no longer a matter of adding more decimals, or more finite elements. It is simply a matter of the physics behind uncertainty that has been tacitly “forgotten” in 20th century CAE.

But why were engineers forced to build deterministic models in the first place? Essentially because of the limited computing power at their disposal. Assumptions such as those of linearity, homogeneity, small displacements, small deformations, and of course determinism, were simply inevitable. Evidently, the more such assumptions are stacked up, the more physics the model misses and, therefore, the less credible it is. But today the situation has changed radically. Thanks to advances in high-performance computing, we can now make fewer assumptions and yet get closer to reality. We can transition from emulation to simulation. We are now realizing that models must first of all be realistic, not precise. Precision is not a characteristic of the universe, and the underlying physics that governs the universe is based on uncertainty principles. The inclusion of elements of uncertainty in computer models boosts the realism of these models to unexpected and unimagined levels. We have finally understood that the name of the game is not the pursuit of perfection. All arts are imitation of Nature. Why should CAE be an exception?

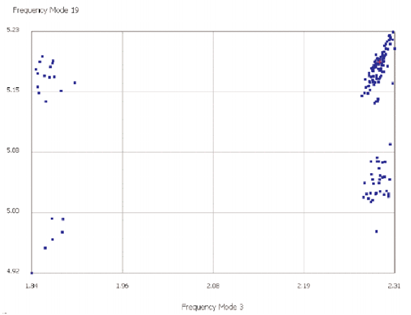

Stochastic simulation with a finite element model starts by specifying tolerances and scatter on all input variables used in the model. This process is known as model randomization, and targets, for example, thickness, material properties, spring stiffness, beam cross-sections, forces, imposed displacements, etc. Each tolerance is defined by certain engineering limits and a particular distribution function. At this stage, the engineer must also select outputs, or observables, such as stresses, frequencies, displacements, etc. The model is then executed a certain number of times (50-100 is typical), randomly changing each of the input variables within its assigned tolerance. This process, based on Monte Carlo techniques, is referred to as stochastic simulation. The result is referred to as a meta-model, which appears in 2D or 3D plots as a constellation or cloud of points and provides an enormous amount of information. The whole, as usual, is greater than the sum of the parts.
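The randomize-and-run loop described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the closed-form cantilever formula stands in for a finite element solver, and the nominal values, tolerances, the clipped Gaussian scatter, and all function names are hypothetical choices rather than anything taken from MSC.Robust Design.

```python
import random
import statistics


def tip_deflection(force, length, youngs_modulus, width, thickness):
    """Tip deflection of an end-loaded cantilever: F L^3 / (3 E I)."""
    inertia = width * thickness**3 / 12.0
    return force * length**3 / (3.0 * youngs_modulus * inertia)


# Nominal values and relative tolerances (illustrative numbers only).
NOMINAL = {"force": 1000.0, "length": 1.0, "youngs_modulus": 210e9,
           "width": 0.05, "thickness": 0.02}
TOLERANCE = {"force": 0.10, "length": 0.01, "youngs_modulus": 0.05,
             "width": 0.02, "thickness": 0.03}


def randomize(rng):
    """Draw one input set; each value stays inside nominal * (1 +/- tol)."""
    sample = {}
    for name, nominal in NOMINAL.items():
        tol = TOLERANCE[name]
        # Assumed distribution: Gaussian with 3 sigma = tolerance,
        # clipped to the engineering limits.
        factor = rng.gauss(1.0, tol / 3.0)
        factor = min(max(factor, 1.0 - tol), 1.0 + tol)
        sample[name] = nominal * factor
    return sample


def stochastic_simulation(n_runs=100, seed=0):
    """Run the model n_runs times and return the cloud of (inputs, output)."""
    rng = random.Random(seed)
    cloud = []
    for _ in range(n_runs):
        inputs = randomize(rng)
        cloud.append((inputs, tip_deflection(**inputs)))
    return cloud


cloud = stochastic_simulation()
deflections = [output for _, output in cloud]
print(f"runs: {len(deflections)}")
print(f"mean deflection: {statistics.mean(deflections):.4e} m")
print(f"scatter (stdev): {statistics.stdev(deflections):.4e} m")
```

Each entry of `cloud` is one point of the meta-model; plotting any input or output against another gives the constellation of points the text refers to.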

The engineer can now quickly see that the simple process of randomization – which is fully automated within MSC.Robust Design – has very important consequences. The more tolerances introduced into a model, the more realistic it becomes. If a model has thousands of variables, then all should be randomized, not just the ones that we think are decisive and influential. This approach overcomes the limitations inherent in any surrogate technique which aims at replacing the finite element code with a simpler model.

The difference between a surrogate and the finite element model is simply phenomenal. That’s why MSC.Robust Design has been designed to quickly enable the introduction of thousands of stochastic variables into FE models. The objective is to help the engineer very quickly generate models that look and feel like the real thing. But why do we insist on randomizing everything in an FE model, when we really do know that good designs ultimately are driven by a small number of variables or features?

First of all, we cannot possibly know beforehand which are the important variables. Secondly, the more complex a product is, the higher the chance that some unimagined combination of uncertainties will have an unexpected and potentially undesired effect. This phenomenon, which can only be observed if the FE model incorporates many variables with tolerances, manifests itself in the form of outliers, or anomalous behavior, which in many cases can be translated directly into risk and liability. It is therefore of paramount importance to anticipate their presence and to understand the circumstances under which they arise. This is only possible if we don’t bias the model. The model must have the potential to actually produce these pathologies – everything in the model must have a tolerance because this reflects reality. Not 30-40 variables, but thousands. Only in this way can we make credible claims about a model’s robustness.
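One simple way to pick outliers out of a cloud of results is a robust z-score based on the median absolute deviation (MAD). The sketch below is an assumption made for illustration, not the method used by MSC.Robust Design; the stress values, the function name `flag_outliers`, and the threshold of 3.5 are hypothetical.

```python
import statistics


def flag_outliers(values, threshold=3.5):
    """Return the indices whose robust z-score exceeds the threshold."""
    median = statistics.median(values)
    mad = statistics.median(abs(v - median) for v in values)
    if mad == 0.0:
        return []  # no measurable scatter, nothing can be flagged
    # 0.6745 rescales the MAD so it is comparable to a standard deviation
    # for Gaussian data.
    return [i for i, v in enumerate(values)
            if 0.6745 * abs(v - median) / mad > threshold]


# Hypothetical peak stresses (MPa) from ten runs of a randomized model.
stresses = [101.2, 99.8, 100.5, 98.9, 100.1, 99.5, 100.9, 135.0, 99.2, 100.3]
for index in flag_outliers(stresses):
    print(f"run {index}: stress {stresses[index]} MPa looks anomalous")
```

Once a run is flagged, the input values that produced it can be inspected to understand the circumstances under which the anomaly arises.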

Example of a complex meta-model, where four distinct clusters are visible. Each point corresponds to an execution of a normal modes analysis (SOL 103). The axes report the natural frequencies of two particular modes of vibration.

What is, ultimately, the result of a stochastic simulation? The primary result, in terms of significance, is the most likely behavior of our system. This is critical knowledge for the engineer, since the most likely performance doesn’t necessarily coincide with the nominal one. It is one thing to tune the nominal performance, but the most probable performance that the system will naturally develop is another. Secondly, the scatter or dispersion of performance is obtained. This may be readily related to manufacturing or assembly quality.
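A small numerical experiment shows why the typical performance can drift away from the nominal one. The sketch assumes a toy response proportional to thickness to the power of minus three and a clipped Gaussian tolerance of 30%; both are illustrative choices. The mean and median are used here as simple stand-ins for the "most likely" behavior, and the sample is larger than the 50-100 runs mentioned earlier only to keep the statistics stable.

```python
import random
import statistics


def response(thickness):
    """Toy nonlinear response: deflection scales as thickness**-3."""
    return 1.0 / thickness**3


def summarize(n_runs=2000, nominal=1.0, tol=0.3, seed=1):
    """Compare the nominal response with statistics of the randomized one."""
    rng = random.Random(seed)
    outputs = []
    for _ in range(n_runs):
        # Assumed scatter: Gaussian, 3 sigma = tolerance, clipped to limits.
        t = rng.gauss(nominal, nominal * tol / 3.0)
        t = min(max(t, nominal * (1.0 - tol)), nominal * (1.0 + tol))
        outputs.append(response(t))
    return {
        "nominal": response(nominal),
        "mean": statistics.mean(outputs),
        "median": statistics.median(outputs),
        "stdev": statistics.stdev(outputs),
    }


summary = summarize()
for name, value in summary.items():
    print(f"{name:>8}: {value:.4f}")
```

Because the response is nonlinear, symmetric scatter on the input produces asymmetric scatter on the output, so the mean sits above the nominal value even though the input is centered on its nominal.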

An interesting application of stochastic simulation is possible if one replaces the real physical tolerances with uniform distributions applied over relatively wide ranges around their respective nominal values. The result is what we call a “model health check.” Though of no statistical significance, a model health check is probably even more important than a proper stochastic simulation. In essence, the exercise enables us to quickly detect anomalies or unexpected behavior in the model, and helps focus attention where it is needed. Very often, meta-models with strange-looking shapes signal that something is potentially wrong.
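A health check of this kind might look like the following sketch. The toy model with a hidden regime change, the 30% uniform spread, and the gap-based indicator are all assumptions chosen for illustration; a real check would run the finite element model and would usually rely on visual inspection of the meta-model.

```python
import random


def toy_model(stiffness, force):
    """Hypothetical model with a hidden regime change below stiffness 0.8."""
    if stiffness < 0.8:
        return 5.0 * force / stiffness
    return force / stiffness


def health_check(model, nominal, spread=0.3, n_runs=100, seed=2):
    """Run the model with uniform scatter of +/- spread around each nominal."""
    rng = random.Random(seed)
    outputs = []
    for _ in range(n_runs):
        inputs = {name: rng.uniform(value * (1.0 - spread),
                                    value * (1.0 + spread))
                  for name, value in nominal.items()}
        outputs.append(model(**inputs))
    return outputs


def largest_gap_fraction(outputs):
    """Largest gap between sorted outputs, as a fraction of their range."""
    ordered = sorted(outputs)
    if len(ordered) < 2 or ordered[-1] == ordered[0]:
        return 0.0
    widest = max(b - a for a, b in zip(ordered, ordered[1:]))
    return widest / (ordered[-1] - ordered[0])


outputs = health_check(toy_model, {"stiffness": 1.0, "force": 1.0})
gap = largest_gap_fraction(outputs)
print(f"largest gap: {gap:.0%} of the output range")
if gap > 0.2:
    print("outputs split into separate clusters; the model deserves a look")
```

A large empty band in the outputs is one example of a strange-looking shape: it suggests the model switches behavior somewhere inside the sampled range.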

MSC.Robust Design offers a powerful technique, Stochastic Design Improvement (SDI), to design systems to user-specified performance. In practice, the desired target performance is defined in the problem’s output space and MSC.Robust Design changes the design variables within specified engineering limits so that this performance is achieved.

The cost of this operation, which may be performed in the presence of tolerances on thousands of variables, is independent of the number of design variables. Typically, a few tens of solver calls are sufficient to reach the desired performance improvements. SDI has very profound significance from a practical point of view. It doesn’t yield optimum performance. Instead, it yields numerous solutions that are acceptable or satisfactory. It allows for compromise. In engineering, it is more important to quickly find an acceptable solution than to spend time on lengthy pursuits of perfection.
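The article does not spell out how SDI works internally, so the sketch below is not MSC's algorithm. It is a generic random-search illustration of the same idea: evaluate a small cloud of designs around the current one, move to the sample whose performance lies closest to the target, and repeat. The toy `performance` function, the cloud size, the step radius, and the target values are all hypothetical.

```python
import math
import random


def performance(design):
    """Toy two-output model standing in for one solver call."""
    x, y = design
    return (x + 2.0 * y, x * y)


def improve(start, target, limits, radius=0.1, cloud_size=8, steps=5, seed=3):
    """Shift the design toward the target using small random clouds."""
    rng = random.Random(seed)
    center = list(start)
    center_dist = math.dist(performance(center), target)
    for _ in range(steps):
        cloud = []
        for _ in range(cloud_size):
            candidate = []
            for value, (low, high) in zip(center, limits):
                moved = value + rng.uniform(-radius, radius) * (high - low)
                # Keep every design variable inside its engineering limits.
                candidate.append(min(max(moved, low), high))
            cloud.append((math.dist(performance(candidate), target), candidate))
        best_dist, best = min(cloud)
        if best_dist < center_dist:  # recenter only when the cloud improves
            center, center_dist = best, best_dist
    return center, center_dist


start = [1.0, 1.0]
target = (4.0, 1.5)
limits = [(0.0, 3.0), (0.0, 3.0)]
initial_dist = math.dist(performance(start), target)
design, final_dist = improve(start, target, limits)
print(f"distance to target: {initial_dist:.3f} -> {final_dist:.3f}")
print(f"design after 41 model calls: {design}")
```

The number of model calls is set by `cloud_size` and `steps`, not by the number of design variables, and the loop stops at a design that is closer to the target rather than at a proven optimum.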

The highlight of MSC.Robust Design is the Decision Map – an original, intuitive, and extremely inviting manner of displaying the results of tens of solver executions all on one page. Decision maps display the topology of information flow within a system. At a glance, one immediately sees what is important and what is not, what drives performance, how variables relate to each other, the global and local correlation levels, the potential to modify a design, and the design rules. In other words, structured information.

But what is structured information? It is knowledge. Finally, CAE becomes a source of knowledge, not just information. Knowledge materializes in the ability to relate and structure rules and conclusions, to piece together information. This is precisely what decision maps achieve. Beyond the concept of sensitivity, decision maps provide a collective, global and holistic measure of system behavior. Many factors converge and lead to multiple, often uncontrollable consequences. In life, after all, things are never as simple as ‘one cause – one effect.’
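The raw material for a map of links between variables is a table of correlations computed from the Monte Carlo samples. The sketch below uses Spearman rank correlation as an assumed measure; the article does not say which measure Decision Maps use. The variable names, the toy stress formula, and the 0.3 cut-off are hypothetical, and ties between samples are ignored because the random values are continuous.

```python
import random


def ranks(values):
    """Rank of each value (0 = smallest); ties are not handled."""
    order = sorted(range(len(values)), key=values.__getitem__)
    result = [0.0] * len(values)
    for rank, index in enumerate(order):
        result[index] = float(rank)
    return result


def pearson(xs, ys):
    """Plain Pearson correlation coefficient."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def spearman(xs, ys):
    """Spearman correlation: Pearson correlation of the ranks."""
    return pearson(ranks(xs), ranks(ys))


# Toy Monte Carlo sample: stress depends on thickness and load only.
rng = random.Random(4)
samples = {"thickness": [], "load": [], "unused": [], "stress": []}
for _ in range(300):
    thickness = rng.gauss(1.0, 0.05)
    load = rng.gauss(1.0, 0.05)
    samples["thickness"].append(thickness)
    samples["load"].append(load)
    samples["unused"].append(rng.gauss(1.0, 0.05))
    samples["stress"].append(load / thickness**2)

# One correlation per pair of variables: the "links" of the map.
names = list(samples)
links = {}
for i, first in enumerate(names):
    for second in names[i + 1:]:
        links[(first, second)] = spearman(samples[first], samples[second])

for (first, second), rho in sorted(links.items(), key=lambda kv: -abs(kv[1])):
    label = "strong" if abs(rho) > 0.3 else "weak"
    print(f"{first:>9} - {second:<9} {rho:+.2f} ({label})")
```

Sorting the links by strength already shows which inputs drive the output and which can be ignored; a graphical map presents the same information at a glance.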

Realistic finite element models represent a valuable asset in a company – an investment that captures corporate know-how and experience. Stochastic simulation provides an opportunity to not only consolidate this knowledge, but also to extract additional, often unexpected information about a system and its performance. Meta-models enable engineers to discover and learn about their products in ways that would otherwise require years of experience and testing. MSC.Robust Design can help any company transform its deterministic finite element models into their stochastic equivalents, elevating traditional and consolidated technology to a higher level and, at the same time, creating an immense amount of new knowledge. In doing so, the engineering community will help to transition CAE from a cost center to a value center.

It is remarkable how the introduction of even minute tolerances into a finite element model can trigger unexpected behavior, often of macroscopic proportions. These are known as ‘butterfly effects’ and are more common than one might imagine. But the most valued result of stochastic simulation is more realistic, healthier, and therefore more credible models. With these models, one can effectively employ simulation as a means of limiting liability and risk, which ultimately leads to more robust designs.

MSC.Robust Design helps leverage and consolidate the level of trust in finite element models. It helps leverage years of investment in FE and CAE. It does so by employing the simplest and most versatile numerical technique, Monte Carlo simulation. Monte Carlo simulation constitutes the rational and philosophical foundation of synthetic knowledge generation because the traditional and probably the best way of generating knowledge is based on empiricism, i.e. on experimentation. Monte Carlo simulation is, after all, experimentation by computing.

A Decision Map illustrating links (correlations) between the various variables of a system. The different colors correspond to classes of inputs and outputs.
