Ontonix has been developing QCM technology since 2005. Numerous applications have been built on it, spanning various sectors of industry, economics, finance and medicine.

In the majority of cases, QCM solutions are used for two main purposes:

- Early-warnings/pre-alarms, identification of pre-crisis situations, anomalies, attacks

- Complexity reduction

The objectives are:

- Reduction of fragility (exposure), which is a consequence of excessive complexity

- Increase of efficiency

- Enhancement of governability/controllability

A number of applications already run in real time or are being designed to do so. Projects in Air Traffic Management (processing of radar output), monitoring of car electronics, fault identification in processing plants, military equipment, monitoring of IT infrastructures in banks and monitoring of patients in intensive care are examples of how QCM technology may be used to identify pre-crisis conditions and issue appropriate early warnings.

Very-large-scale analyses conducted with the CINECA supercomputing centre have illustrated that QCM-based technology may today be applied to the monitoring and analysis of systems with up to one million variables (channels).

QCM technology finds a natural application in the monitoring of network-based infrastructures which are critical to the functioning of a corporation or a state, such as power distribution grids, telecommunications, railways, motorways, water and medicine distribution, financial services, etc. These networks function in parallel and constitute an immense and highly complex system of systems. Very high complexity is the key characteristic of these assets. High complexity is not only a source of fragility; it also reduces efficiency and governability. To ensure the resilience and controllability of these systems, it is proposed to monitor the complexity of this system of systems at different levels of granularity:

- Local monitoring – fine-grain monitoring of a given set of hubs known to be of vital importance

- Global monitoring – coarse-grain monitoring of the entire system of networks

In the second case, global, holistic information is obtained, providing unique insight into the structural resilience (fragility), governability and dynamics of the entire system of systems. The importance of such information cannot be overstated.

Monitoring of network complexity and resilience may be performed based on data traffic volumes, rates, delays, response times, uptime, or any other characteristics which are available at various nodes of the network in equipment such as routers.

It is proposed to incorporate the QCM engine into conventional routers in order to provide local early warnings of network anomalies and attacks.

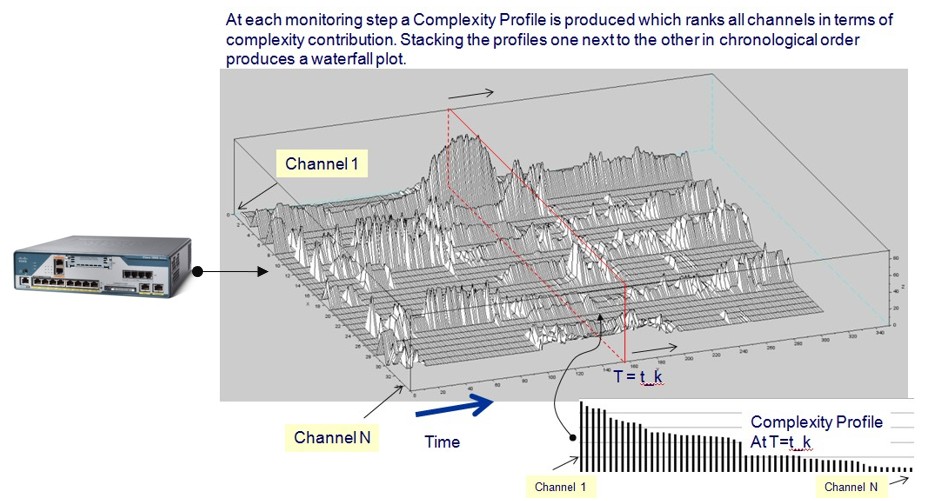

The concept is illustrated in the figure below, where N channels of network-specific data are processed to produce the so-called Complexity Profile: a ranked list of contributions of each channel to network complexity and resilience.

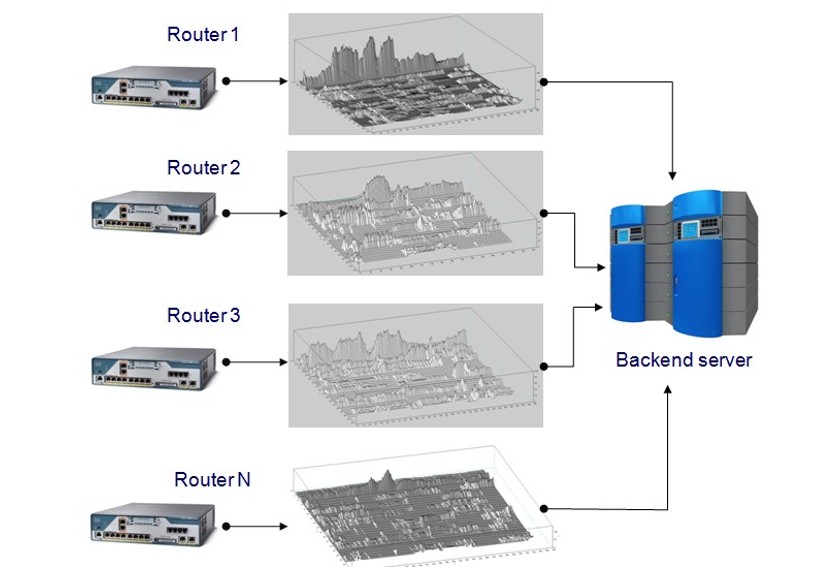
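The idea of a ranked list of per-channel contributions can be sketched in a few lines. The article does not describe how the QCM engine actually computes complexity, so the example below swaps in a simple stand-in: each channel is scored by the sum of its absolute Pearson correlations with every other channel, and the scores are normalised to percentages. The function names `pearson` and `complexity_profile`, the channel names and the scoring rule are all assumptions made for illustration, not Ontonix's method.

```python
import math
from typing import Dict, List, Tuple


def pearson(x: List[float], y: List[float]) -> float:
    """Pearson correlation of two equal-length series (0.0 if either is constant)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    return sxy / math.sqrt(sxx * syy)


def complexity_profile(window: Dict[str, List[float]]) -> List[Tuple[str, float]]:
    """Rank channels by their share (in percent) of a correlation-based proxy score.

    NOTE: this proxy is an assumption for illustration only; it is not the
    proprietary QCM complexity measure.
    """
    names = list(window)
    score = {name: 0.0 for name in names}
    for i, a in enumerate(names):
        for b in names[i + 1:]:
            strength = abs(pearson(window[a], window[b]))
            score[a] += strength
            score[b] += strength
    total = sum(score.values())
    if total == 0:
        return [(name, 0.0) for name in names]
    shares = ((name, 100.0 * s / total) for name, s in score.items())
    return sorted(shares, key=lambda pair: pair[1], reverse=True)


# Hypothetical window of three router channels, five samples each.
window = {
    "delay": [1.0, 2.0, 3.0, 4.0, 5.0],
    "volume": [2.0, 4.0, 6.0, 8.0, 10.0],
    "uptime": [1.0, 1.0, 1.0, 1.0, 1.0],
}
for channel, share in complexity_profile(window):
    print(f"{channel}: {share:.1f}%")
```

In this toy window the constant `uptime` channel interacts with nothing and lands at the bottom of the ranking, while the two coupled channels share the top.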

In terms of data volume, a Complexity Profile is orders of magnitude smaller than the raw traffic data needed to compute it. In a Global Monitoring context, only the complexity profiles from each router in a network need to be transmitted to a backend server for analysis. This is illustrated in the following figure.

Transmitting complexity profiles to a backend server dramatically reduces the data volume which needs to be sent in order to perform analysis of the entire network (Global Monitoring).
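A back-of-the-envelope calculation shows where the saving could come from. The sketch below assumes that a profile carries one value per channel and that the raw data is a full window of samples per channel; the window length, channel count and 8-byte value size are illustrative assumptions, not figures from the article.

```python
def raw_bytes(channels: int, samples_per_window: int, bytes_per_value: int = 8) -> int:
    """Size of one window of raw traffic data: every sample of every channel."""
    return channels * samples_per_window * bytes_per_value


def profile_bytes(channels: int, bytes_per_value: int = 8) -> int:
    """Size of one complexity profile, assuming one contribution value per channel."""
    return channels * bytes_per_value


# Hypothetical router: 50 channels, 1000 samples per analysis window.
raw = raw_bytes(channels=50, samples_per_window=1000)
profile = profile_bytes(channels=50)
print(raw, profile, raw // profile)
```

Under these assumptions the reduction factor equals the number of samples per window, so longer analysis windows yield proportionally larger savings.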

The incorporation of the QCM engine into a device such as a router will produce a Complexity and Resilience Monitoring Device. Such a device will utilize data which is already available within the original device (e.g. router) and which will be buffered into an array, constituting the input to OntoNet™.
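The buffering step can be pictured as a fixed-length sliding window over the measurements the router already collects. The sketch below is one possible shape for it; the class name `ChannelBuffer`, its methods and the channel names are hypothetical, and the actual input format expected by OntoNet™ is not described in the article.

```python
from collections import deque
from typing import Dict, List, Sequence


class ChannelBuffer:
    """Sliding window of the most recent samples, one column per channel."""

    def __init__(self, channels: Sequence[str], window_size: int) -> None:
        self.channels = tuple(channels)
        # A deque with maxlen silently discards the oldest row once full.
        self._rows: deque = deque(maxlen=window_size)

    def push(self, sample: Dict[str, float]) -> None:
        """Append one reading per channel, in the fixed channel order."""
        self._rows.append(tuple(float(sample[c]) for c in self.channels))

    def is_full(self) -> bool:
        return len(self._rows) == self._rows.maxlen

    def as_array(self) -> List[List[float]]:
        """Return the window as a samples x channels array (list of rows)."""
        return [list(row) for row in self._rows]


# Hypothetical readings taken from counters a router already maintains.
buf = ChannelBuffer(channels=["volume", "delay"], window_size=3)
for step in range(4):
    buf.push({"volume": 100.0 + step, "delay": 5.0 * step})

if buf.is_full():
    print(buf.as_array())
```

Once the window is full, each new reading displaces the oldest one, so the array handed to the analysis engine always covers the most recent period.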

The type of information that may be obtained by deploying such a device in a large network is instrumental in:

- Providing early warnings in case of increased network fragility and anomalies.

- Providing indications as to where to intervene in order to make the network more resilient and less complex.

Anomaly detection is the identification of rare items, events, patterns or observations which raise alerts by differing significantly from most of the data. The idea behind anomaly detection is to identify, or anticipate, cyberattacks and malfunctions. Machine learning can detect anomalies very efficiently, and various algorithms address the problem. Typically, this is accomplished by presenting the learning algorithm with tens, hundreds or even thousands of examples of anomalies. And herein lies the problem.

In systems such as large networks or critical infrastructures, high complexity may hide anomalies which can remain unknown or dormant for extended periods of time. Consequently, training an algorithm to recognize them is impossible. In addition, highly complex systems often comprise thousands or hundreds of thousands of data channels. In such a context, defining and describing an anomaly may be very difficult, and producing a significant set of learning vectors is simply not feasible.
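One way to picture an approach that needs no labelled examples is to watch a single scalar indicator (for instance, a complexity value computed per time window) and flag departures from its own recent behaviour. The sketch below is a generic rolling z-score check, offered only as an illustration of label-free detection; the function name `early_warnings`, the baseline length and the threshold are assumptions, and this is not a description of how the QCM engine issues warnings.

```python
from statistics import mean, pstdev
from typing import List


def early_warnings(series: List[float], baseline: int = 20, threshold: float = 3.0) -> List[int]:
    """Return indices whose value deviates from the preceding `baseline`
    points by more than `threshold` standard deviations.

    No examples of anomalies are needed: the reference is the series itself.
    """
    alerts = []
    for t in range(baseline, len(series)):
        reference = series[t - baseline:t]
        mu = mean(reference)
        sigma = pstdev(reference)
        if sigma > 0 and abs(series[t] - mu) > threshold * sigma:
            alerts.append(t)
    return alerts


# Hypothetical indicator: stable around 10, then a sudden jump.
history = [10.0, 10.2, 9.8, 10.1, 9.9] * 4
print(early_warnings(history + [14.0]))
print(early_warnings(history + [10.1]))
```

The check adapts to whatever "normal" looks like for a given node, at the cost of being blind to anomalies that develop slowly enough to be absorbed into the baseline.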

Read full article by CISCO:
