Entropy affects every physical thing larger than an atom. Such things run down, decay, and eventually cease to exist in their original form. This unavoidable demise has led us to the greatest mystery of the Universe that we have ever encountered: with an overwhelming tendency toward decay in the macroscopic world, how does structure come to exist at all? The missing link in thermodynamics as taught in schools today seems to be a concise explanation of why order and structure abound in a universe purported to be driven by a Second Law which states that disorder increases, always and everywhere. What is sought is a bridge between physics and biology, sometimes called the Fourth Law of Thermodynamics: essentially an explanation of why a biosphere exists.

The thermodynamic laws developed in the nineteenth century by Clausius, Gibbs and Boltzmann made people believe that structure in the Universe should not exist. The Second Law and the concept of entropy made it clear that all systems have a tendency to decay into disorder and then remain static in equilibrium with their surroundings. Spontaneous creation of order seemed impossible, despite the obvious evidence of stars, molecules and life. Since then, different explanations have been proposed. We have our own, which we present here in an extremely simplified manner.

We introduce the following theorem:

We are unable to provide the proof as this cannot be done without disclosing proprietary information which lies at the core of the QCM algorithm. However, we can propose this simple interpretation of the meaning of the theorem.

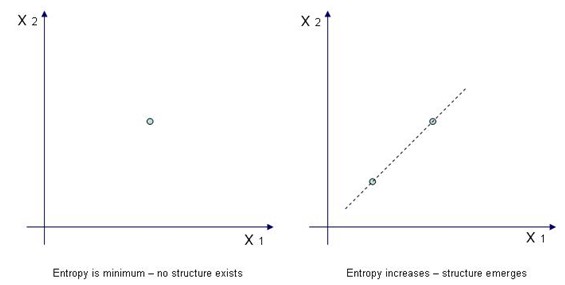

When entropy is very low (see the figure below, left) there is very little structure. As entropy (E) increases, it actually leads to structure (S) (right). By ‘structure’ we mean an interdependency between two variables which is necessary in order to guarantee functionality.

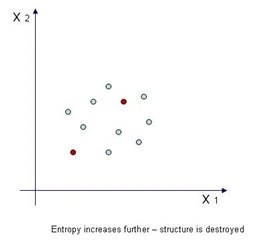

Now, as entropy increases further (this is inevitable because of the Second Law of Thermodynamics), the same entropy that helped create structure destroys it, see below. In fact, the two red dots which sustained structure in the image above are now surrounded by other dots (data points) which progressively soften it, until structure is completely dissolved.

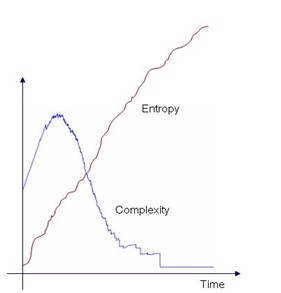
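The softening described above can be reproduced in a toy setting. The sketch below is not the QCM algorithm, which is proprietary; it assumes, purely for illustration, that entropy can be stood in for by the Shannon entropy of a binned scatter plot and structure by the absolute Pearson correlation between two variables. All function names are hypothetical. It covers only the second phase, in which added scatter dissolves an existing interdependency, and not the initial emergence of structure.

```python
import math
import random


def joint_entropy_bits(xs, ys, lo=-8.0, hi=8.0, bins=16):
    """Shannon entropy (in bits) of the (x, y) points binned on a fixed grid."""
    width = (hi - lo) / bins
    counts = {}
    for x, y in zip(xs, ys):
        # Clamp to the grid so extreme outliers fall into the edge cells.
        i = min(bins - 1, max(0, int((x - lo) / width)))
        j = min(bins - 1, max(0, int((y - lo) / width)))
        counts[(i, j)] = counts.get((i, j), 0) + 1
    n = len(xs)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


def abs_correlation(xs, ys):
    """Absolute Pearson correlation, used here as a stand-in for 'structure'."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    if sxx == 0.0 or syy == 0.0:
        return 0.0  # a constant variable carries no interdependency
    return abs(sxy) / math.sqrt(sxx * syy)


def sweep(noise_levels, n=2000, seed=0):
    """For each noise level, scatter points around the line y = x and measure both proxies."""
    rows = []
    for noise in noise_levels:
        rng = random.Random(seed)  # same seed: only the noise scale changes
        xs = [rng.uniform(-1.0, 1.0) for _ in range(n)]
        ys = [x + noise * rng.gauss(0.0, 1.0) for x in xs]
        rows.append((noise, joint_entropy_bits(xs, ys), abs_correlation(xs, ys)))
    return rows


for noise, entropy, corr in sweep([0.0, 0.25, 0.5, 1.0, 2.0]):
    print(f"noise={noise:4.2f}  entropy={entropy:5.2f} bits  |corr|={corr:4.2f}")
```

In this toy the entropy proxy rises with every increase in scatter while the correlation falls, which mirrors the way additional data points soften the relationship in the image above. The choice of grid, sample size and noise levels is arbitrary.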

Consequently, as entropy increases, under certain circumstances it gives rise to structure, see plot below. Adding further entropy to a system will then progressively “soften” the interdependencies between its variables until they saturate and finally vanish. This is why complexity peaks while entropy can only increase.

Entropy, therefore, possesses a beautifully dual nature. While it is generally regarded as something destructive, it can actually give rise to new structure. It gives birth only to kill later. The process is called aging.

As far as the Universe is concerned (it is the only known system to which the Second Law of Thermodynamics fully applies), we do not know where on the complexity curve it is today. In other words, we don’t know whether it has reached its peak development (the maximum amount of organized structure) or whether we are witnessing its demise. One thing seems to be true: there was indeed a Big Bang. There was a time when both entropy and structure were at a minimum.

So, complexity is the bridge which connects structure and entropy. Complexity brings together the two strongest and most antagonistic forces of Nature: the urge to create structure and the compulsion to destroy it. In symbols, C = f(S; E).
