Our complexity metric, like all good metrics rooted in physics, has a lower and an upper bound. Critical complexity, as we know, is the maximum level of complexity any given system can sustain, albeit with a feeble structure that is about to collapse. However, there is also a theoretical limit as to how complex a system can really get.

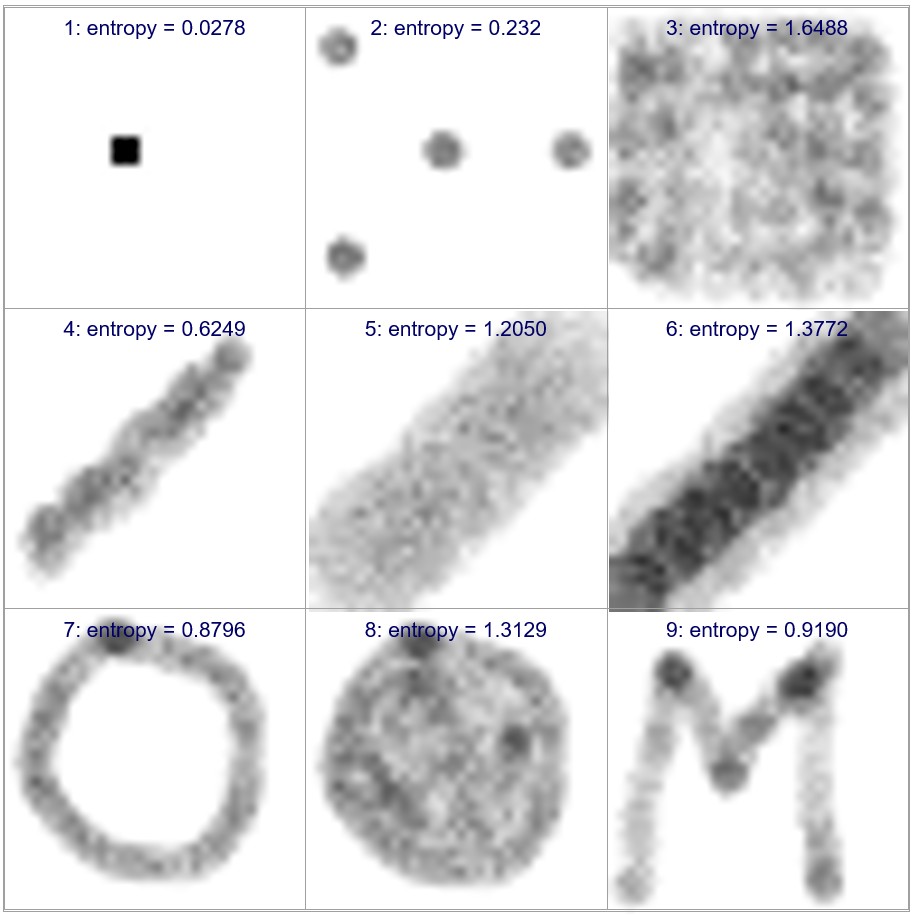

Below are examples of different types of interdependency between two variables. Each image is in fact a scatter plot with M data points. A uniform distribution of all M samples over the plot yields the highest entropy, because no region of the plot is more likely to contain a point than any other.
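The entropy claim can be checked numerically. The following is a minimal sketch, not the QCM algorithm itself: it assumes plain Shannon entropy computed on an 8 x 8 binning of the unit square, and the names `binned_entropy`, `uniform` and `linear` are hypothetical, introduced only for this illustration. It compares M points scattered uniformly with M points lying on a straight line.

```python
import math
import random


def binned_entropy(points, bins=8):
    """Shannon entropy, in bits, of a 2D histogram over [0, 1) x [0, 1).

    Assumption: equal-width binning; the source does not say how the
    scatter plots are discretised.
    """
    counts = {}
    for x, y in points:
        i = min(int(x * bins), bins - 1)
        j = min(int(y * bins), bins - 1)
        counts[(i, j)] = counts.get((i, j), 0) + 1
    n = len(points)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


rng = random.Random(0)  # fixed seed so the run is repeatable
M = 20000

# Case 1: no structure at all, points spread uniformly over the square.
uniform = [(rng.random(), rng.random()) for _ in range(M)]

# Case 2: a perfect linear dependency, y = x, using the same x values.
linear = [(x, x) for x, _ in uniform]

h_uniform = binned_entropy(uniform)
h_linear = binned_entropy(linear)
h_max = math.log2(8 * 8)  # upper limit for 64 cells: 6 bits

print(f"uniform scatter: {h_uniform:.3f} bits")
print(f"linear relation: {h_linear:.3f} bits")
print(f"upper limit:     {h_max:.3f} bits")
```

Under these assumptions the uniform scatter comes close to the 6-bit ceiling, while the straight line occupies only the eight diagonal cells and so cannot exceed 3 bits. The more structure a relationship has, the further its entropy falls below the uniform case.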

Evidently, the above value of maximum complexity can be significantly higher than critical complexity. In fact, when a system approaches critical complexity, the QCM algorithm starts to eliminate all insignificant interdependencies, not allowing them to reach a nearly uniform distribution.

However, the above result is still useful in that it illustrates how the maximum theoretical complexity is a function of the fourth power of the number of dimensions, while complexity itself grows with the third power.
