There is an ongoing debate on whether computers can help generate knowledge. The oldest way of generating knowledge is experimentation. Ever since man started to experiment, initially without any rigor, almost accidentally, and later, thanks to Galileo, in a more or less systematic manner, he has realized that knowledge is equivalent to experience. Experience, in turn, is a set of conclusions or rules drawn from repeated experiments. Experimentation by computer, that is, simulation in its true sense, is instrumental to knowledge generation. Monte Carlo Simulation (MCS) is the simplest, most universal and versatile way of doing experimentation via computing and therefore establishes a rigorous platform for the actual computation of knowledge.

Experimentation was forbidden for a very long time. Once Aristotle had established his dogmatic approach to “science”, experiments were deemed unnecessary, since a theory built on dogmas, that is, upon absolute truth, doesn’t require verification. This technical note advocates the opposite: experimentation by computer, simulation in its true Monte Carlo sense, as a means of generating knowledge.

Strangely enough, although many people speak of doing computer simulation, they do not. From a statistical point of view, a single deterministic computer run constitutes an analysis, not a simulation. It takes a set of analyses to constitute a simulation. Monte Carlo analyses are, therefore, simulations. Along these lines, a single deterministic model does not constitute a model in the true sense. Instead, a collection of deterministic models constitutes the model.

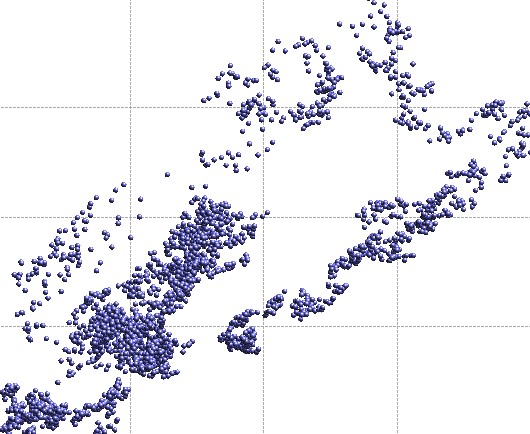

An example of what Monte Carlo Simulation delivers, a meta-model, is illustrated below. Each point in the constellation corresponds to a single deterministic computer run, essentially an analysis in the classical sense. The shape and topology of a given meta-model depend on the physics behind a particular problem. Meta-models can be extremely complex and intricate, and carry a tremendous amount of knowledge. It is therefore clear that a single point has limited value, regardless of how much detail is invested into its computation. Evidently, the primary objective is to explain the reasons behind a meta-model’s shape, its local density fluctuations, formation of clusters, underlying structure, topology, existence of isolated points (outliers), etc. It is precisely these attributes of a meta-model that constitute the true knowledge of a given system, and which can be translated into concrete decisions. OntoNet by Ontonix has been conceived with this very purpose in mind: to extract the hidden knowledge in complex multi-dimensional meta-models.
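The distinction between a single analysis and a simulation can be made concrete with a minimal sketch. Everything in it is an assumption chosen for illustration: the cantilever-beam formula stands in for an arbitrary deterministic solver, and the input scatter (means and standard deviations) is invented. The point is only that one call gives one point, while repeated calls with scattered inputs give the cloud of points that forms the meta-model.

```python
import random


def beam_deflection(load, length, stiffness):
    """Hypothetical deterministic solver: tip deflection of a cantilever
    beam under a tip load, P * L^3 / (3 * E * I)."""
    return load * length ** 3 / (3.0 * stiffness)


def monte_carlo(n_runs=100, seed=0):
    """Call the deterministic solver n_runs times with randomly scattered
    inputs and collect every run as one point of the meta-model."""
    rng = random.Random(seed)
    cloud = []
    for _ in range(n_runs):
        load = rng.gauss(1000.0, 100.0)      # N, assumed scatter
        length = rng.gauss(2.0, 0.02)        # m, assumed scatter
        stiffness = rng.gauss(2.0e6, 1.0e5)  # N*m^2 (E*I), assumed scatter
        deflection = beam_deflection(load, length, stiffness)
        cloud.append((load, length, stiffness, deflection))
    return cloud


# One deterministic run: an analysis, a single point.
single_analysis = beam_deflection(1000.0, 2.0, 2.0e6)

# A set of runs: a simulation, i.e. a cloud of points (the meta-model).
cloud = monte_carlo(n_runs=100)
```

Each tuple in `cloud` is one point of the constellation. Shape, clusters and outliers only become visible once all points are looked at together.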

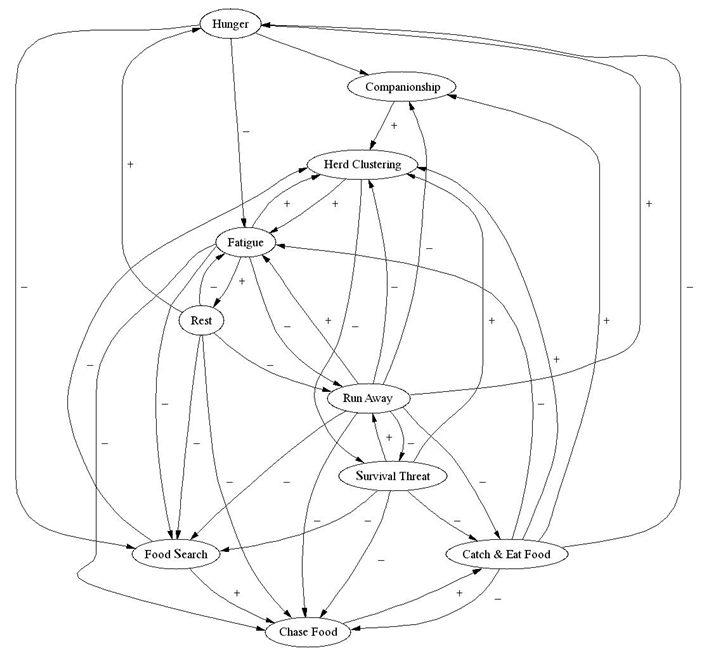

Many decision-making and problem-solving tasks are too complex to be understood quantitatively. On a qualitative basis, however, it is possible to attack very complex problems by using knowledge that is imprecise rather than precise. Knowledge can be expressed in a more natural manner by using Fuzzy Cognitive Maps instead of tables of numbers or functions. The benefit of describing complex systems via Cognitive Maps lies in their capability to describe real-world problems, which inevitably entail some degree of imprecision and noise in the variables and parameters. Complex systems are usually composed of a number of dynamic agents, which are interrelated in intricate ways. Feedback propagates causal influences in complicated chains. Formulating a mathematical model may be difficult, costly, or even impossible. An example of a Fuzzy Cognitive Map of a dolphin is depicted below.

In a Fuzzy Cognitive Map, variable concepts or sub-components are represented via nodes. The graph’s edges are the causal influences between the concepts/components. The value of a node reflects the degree to which the concept is active in the system at a particular time. Cognitive Maps prove invaluable in engineering, as they enable one to comprehend the information flow within a system and to appreciate how components interact. Today, with the techniques developed at Ontonix, it is possible to generate Fuzzy Cognitive Maps automatically, instead of constructing them manually from subjective knowledge, as has been done in the past.
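The node-and-edge description above can be sketched in a few lines. This is the simplest textbook-style update rule for a Fuzzy Cognitive Map (each node's new activation is a squashed weighted sum of the nodes that influence it), not the technique developed at Ontonix, which this note does not describe. The three concepts and their weights are hypothetical, chosen only to show the mechanics.

```python
import math


def sigmoid(x, steepness=1.0):
    """Squash a weighted sum into the open interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-steepness * x))


def fcm_step(activations, weights):
    """One update of the map. weights[i][j] is the causal influence of
    concept i on concept j, assumed to lie in [-1, 1]."""
    n = len(activations)
    return [
        sigmoid(sum(activations[i] * weights[i][j] for i in range(n)))
        for j in range(n)
    ]


def fcm_run(activations, weights, max_steps=100, tol=1e-6):
    """Iterate until the activations stop changing or max_steps is reached."""
    for _ in range(max_steps):
        updated = fcm_step(activations, weights)
        if max(abs(a - b) for a, b in zip(updated, activations)) < tol:
            return updated
        activations = updated
    return activations


# Hypothetical concepts: 0 = load, 1 = stress, 2 = fatigue.
weights = [
    [0.0, 0.8, 0.0],   # load raises stress
    [0.0, 0.0, 0.7],   # stress raises fatigue
    [-0.3, 0.0, 0.0],  # fatigue reduces the admissible load (feedback)
]
final_state = fcm_run([1.0, 0.0, 0.0], weights)
```

Other common variants add each node's previous value to the sum or use a different squashing function. The choice affects how quickly, and whether, the map settles.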

But what is knowledge? Ultimately, it is a dynamic and organized set of inter-related (fuzzy) rules. A Fuzzy Cognitive Map is a pictorial representation of these rules and of the inter-relationships that exist between them. It is clear, however, that cognitive maps are not static entities. As the values of certain nodes and links are changed, the topology of the map undergoes changes. Therefore, it is of great interest to find all the possible cognitive maps of a given system. This is precisely what OntoNet does – it finds all the states (cognitive maps) in which a system – or at least a model of a system – may find itself functioning. All that is required to accomplish this is a computer model of the system, a Monte Carlo simulation environment, which can be used to obtain the corresponding meta-model, and OntoNet, to determine the cognitive maps of the system. What is the computational cost of this? Taking the cost of a single deterministic job as 1, a Monte Carlo Simulation typically requires around 100 solver calls (to capture the basic features of the response). Certainly not a Grand Challenge for most disciplines.
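To give a feel for the pipeline (computer model, Monte Carlo runs, meta-model, map), here is a deliberately naive stand-in for the last step. It is not OntoNet's algorithm, which this note does not disclose. It simply links two variables whenever their Pearson correlation across the Monte Carlo samples exceeds a threshold. The toy model, the variable names and the threshold of 0.5 are all assumptions.

```python
import math
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equally long sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def correlation_map(samples, names, threshold=0.5):
    """Return {(name_a, name_b): r} for every pair of variables whose
    absolute correlation over the samples reaches the threshold."""
    columns = list(zip(*samples))
    links = {}
    for i in range(len(names)):
        for j in range(i + 1, len(names)):
            r = pearson(columns[i], columns[j])
            if abs(r) >= threshold:
                links[(names[i], names[j])] = r
    return links


# Toy meta-model from 100 runs: z depends on x, y is unrelated noise.
rng = random.Random(1)
samples = []
for _ in range(100):
    x = rng.uniform(0.0, 1.0)
    y = rng.uniform(0.0, 1.0)
    z = 2.0 * x + rng.gauss(0.0, 0.05)
    samples.append((x, y, z))

links = correlation_map(samples, ["x", "y", "z"])
```

The result is an undirected map with a single strong link between `x` and `z`. Correlation alone says nothing about the direction of causality and misses nonlinear dependencies, which is one reason a linear measure like this is only a rough first pass.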

Knowledge constitutes the most compact form of representing data and information. Contemporary analysis-based computer-aided engineering (CAE) is not structured for the knowledge revolution, in that it can only yield data. For CAE and high-performance computing (HPC) to become an important source of knowledge, it is important to move to simulation-based CAE. If knowledge generation is not seen as the main motivation of CAE, it will be difficult to evolve CAE beyond today’s pseudo knowledge-based engineering (KBE) based on table look-up. With OntoNet, we’ve found a new way of using Monte Carlo Simulation: computing ontology. A totally new way of putting computers to work.

Ontology – branch of philosophy dealing with the ultimate nature of reality or being.
