The approach to measuring the complexity of a process or system adopted by Ontonix does not rely on models or any modelling techniques: it is a model-free approach. What does this mean? When statisticians or analysts utilize data to build a model, they typically do the following:

- Clean up the data

- Find a model which fits the data well

- Measure the goodness of fit of the model wrt the original data

- Use the model instead of the original data to make decisions

This process presents two fundamental issues:

- Cleaning or filtering (e.g. of field data) often destroys precious information. Data is expensive.

- A model induces additional uncertainty (risk) to an already risky situation. This additional mode-induced risk is very rarely measured. The additional risk stems from the fact that different model can pass through the same data and provide very similar results.

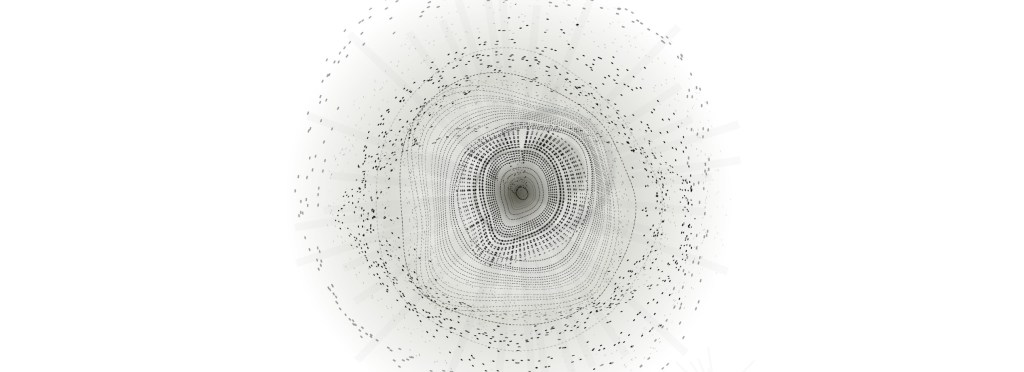

Ontonix has developed an original model-free technology for data processing which we call Visual Analytics in that it uses image processing techniques to infer dependencies between parameters. The method has the great advantage of conserving the original information contained in the data and overcomes the classical problems with statistics and modelling.

Conventional modelling presents also other problems which must be taken into account when approaching a large industrial-scale problem:

- Models may be built in an infinity of ways. There is no single best way to build a model.

- No two individuals will adopt and agree on the same model.

- The more complex a model, the more assumptions and hypotheses need to be made in order to build it.

- Unwrapping – a model can only give back in return what has been hard-wired into it.

- Models are rarely verified/validated beyond the domain for which they have been intended but are often used outside of those domains.

- The more complex a model the more fragile it is. More things can wrong in a more complex model and remain unnoticed for a long time.

- Conventional modelling technology is not adequate when it comes to accounting for hundreds of thousands of parameters. Models must be maintained and updated (re-calibrated) periodically. This can be expensive.

- Huge and complex models are extremely difficult to validate.

- Building large and complex multi-disciplinary models requires a very high level of preparation and mathematical skill, not to mention specific knowledge of numerous disciplines.

- The most important things in a model are those it doesn’t contain.

The alternative, therefore, is not to engage in lengthy and expensive model development programs but to resort to model-free approaches, which not only leverage the huge amounts of data that are available but which naturally by-pass the problems inherent with model-building.

The model-free approach developed by Ontonix does, however, deliver the classical information one would obtain using a conventional model:

- What are the main drivers, parameter ranking.

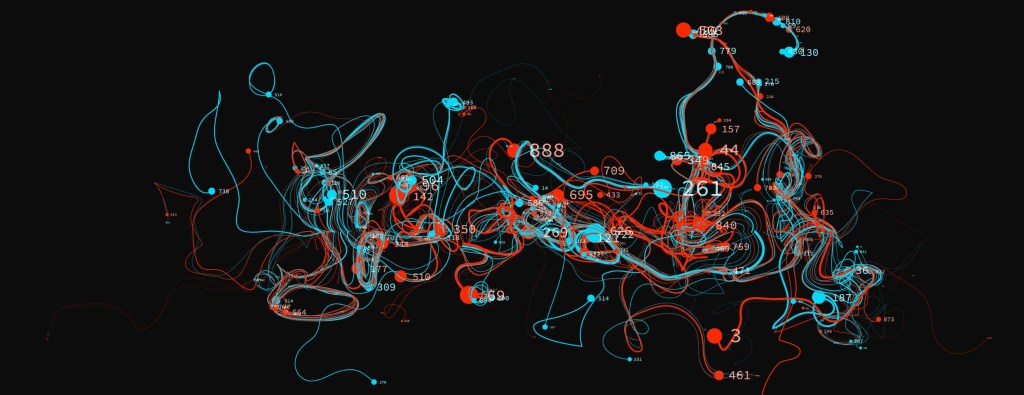

- What are the interdependencies between the various parameters (Complexity Maps).

- What if analyses (e.g. what happens to my revenue next quarter if the price of oil goes up?).

- Extrapolations, forecasts.

- Better understanding of a phenomenon or process.

A model-free data-centric approach automatically takes care of any changes in the manufacturing or production process as no model needs to be updated or re-calibrated. It is all in the data. Today all corporations collect data and in the future businesses will depend on data more and more. Therefore, it is necessary to develop a corporate culture in which data collection, storage and processing become integral part of the business and its processes. Quantitative Complexity Management is a rational platform on which to build such a culture.

When envisaging to deploy a company-wide Quantitative Complexity Management (or simply monitoring) programme, the following recommendations should be kept in mind:

- Monitoring/Management of complexity must be transversal, embracing more aspects of a company, i.e. manufacturing/production, sales, marketing, financials, raw materials, macro-economic aspects.

- ERP of Data Warehouses should be used in order to provide a reliable source of quality data.

- See complexity management as a new, modern means of doing strategic and holistic risk management. This allows to integrate risk management and corporate strategy into one – very few companies do this today.

- It is necessary to develop a data-centric culture in the company – it’s all in the data. Employees must be aware of the importance of collecting and storing of reliable data.

- Avoid models and especially agent-based models – too much depends on assumptions. Models require modelling experts. Large models require many experts. Models must be maintained and tuned. When an expert leaves, he must be replaced and trained.

- Models themselves induce risk. Adopt model-free methods: exploit existing real (not simulated) data which is already available in corporate Data Warehouses. It contains huge amounts of information which a model will never be able to deliver.

- No special HW or special skills (Human Resources, i.e. highly skilled mathematicians, or physicists able to build models) are needed to implement/deploy QCM on an industrial scale. All that is required is:

- Individuals that know the manufacturing/production and maintenance procedures in a company. These already exist.

- Data. This is available in an ERP/Data Warehouse system in any large corporation

- A QCM engine, such as OntoNet™, integrated into the corporate IT system. Integration is very fast.

However, the most important point to keep in mind is that:

Complexity is not a phenomenon of spontaneous aggregation and self-organization which takes systems to criticality (the so-called border on the edge of chaos). Such phenomena lie at the basis of almost every process in Nature and it is not clear why they are called “complexity”.

Complexity, according to our new vision, is a property of every system. There are no “complex systems”. There exist systems. Some have much complexity, some have less. Segregations into linear or non-linear, or complex and non-complex systems, will not contribute significantly to the development of science. Science needs generalizations not compartmentalization. The “conventional approach” to complexity has not been able to produce a single workable definition of complexity, not to mention a measure thereof. Speaking of complexity, complex systems and their properties without measuring complexity is like trying to lower a patient’s cholesterol without ever measuring it. Serious science starts when you begin to measure.

0 comments on “Complexity: To Model Or not To Model?”