1. Introduction

The trend toward increasingly diversified product offerings is putting pressure on manufacturing companies like never before. As a result, the complexity of modern products and of the associated manufacturing processes is rapidly increasing. High complexity, as we know, is a prelude to vulnerability. It is a fact that in all spheres of social life excessive complexity leads to inherently fragile situations. Humans perceive this intuitively and try to stay away from highly complex situations. But can complexity be taken into account in the design and manufacturing of products? The answer is affirmative. Recently developed technology, which allows engineers to actually measure the complexity of a given design or product, makes it possible to use complexity as a design attribute. A product may therefore be conceived and designed with complexity in mind from day one. Not only stresses, frequencies or fatigue life but also complexity can become a design target for engineers. Evidently, if CAE is to cope with the inevitable increase of product complexity, complexity must somehow enter the design loop. As mentioned, today this is possible.

Before going into details of how this may be done, let us first take a look at the underlying philosophy behind a “Complexity-Based CAE” paradigm. Strangely enough, the principles of this innovative approach to CAE were established in the 14th century by William of Ockham when he announced his law of parsimony – “Entia non sunt multiplicanda praeter necessitatem” – which boils down to the more familiar “All other things being equal, the simplest solution is the best.” The key, of course, is measuring simplicity (or complexity). Today, we may phrase this fundamental principle in slightly different terms:

Complexity X Uncertainty = Fragility

This is a more elaborate version of Ockham’s principle (known as Ockham’s razor), which may be read as follows: the level of fragility of a given system is the product of the complexity of that system and of the uncertainty of the environment in which it operates. In other words, in an environment with a given level of uncertainty or “turbulence” (sea, atmosphere, stock market, etc.) a more complex system or product will prove more fragile and therefore more vulnerable. Evidently, for a system with a given level of complexity, an increase in the uncertainty of its environment also leads to an increase in fragility. We could articulate this simple concept further by stating that:

C_design X (U_manuf + U_env) = F

In the above equation we explicitly indicate that the imperfections inherent to the manufacturing and assembly process introduce uncertainty which may be added to that of the environment. What this means is simple: more audacious (highly complex) products require more stringent manufacturing tolerances in order to survive in an uncertain environment. Conversely, if one is willing to decrease the complexity of a product, then a less sophisticated and less expensive manufacturing process may be used if the same level of fragility is sought.
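The trade-off described above can be made concrete with a small numerical sketch. It evaluates the relation C_design X (U_manuf + U_env) = F and then solves it for U_manuf at a fixed fragility target. The function names and all numbers are illustrative assumptions (unit-less values chosen for the example, not measured data).

```python
def fragility(c_design: float, u_manuf: float, u_env: float) -> float:
    """F = C_design * (U_manuf + U_env), the relation stated in the text."""
    return c_design * (u_manuf + u_env)


def allowed_manufacturing_uncertainty(f_target: float, c_design: float, u_env: float) -> float:
    """Solve the same relation for U_manuf at a fixed fragility target."""
    if c_design <= 0:
        raise ValueError("complexity must be positive")
    return f_target / c_design - u_env


# Illustrative, unit-less numbers (assumptions, not measured data).
u_env = 0.2

# Baseline: a complex design that needs tight manufacturing tolerances.
f_target = fragility(c_design=8.0, u_manuf=0.05, u_env=u_env)

# Simpler design, same environment, same fragility target.
u_manuf_simpler = allowed_manufacturing_uncertainty(f_target, c_design=5.0, u_env=u_env)

print(f"target fragility: {f_target:.2f}")
print(f"allowed U_manuf at C = 5: {u_manuf_simpler:.2f}")
```

With these assumed values, lowering the complexity from 8 to 5 lets the tolerated manufacturing uncertainty grow from 0.05 to about 0.2 at the same fragility, which is the "less sophisticated and less expensive manufacturing process" argument in numerical form.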

It goes without saying that concepts such as fragility and vulnerability are intimately related to robustness. High fragility = low robustness. In other words, for a given level of uncertainty in a certain operational environment, the robustness of a given system or product decreases as its complexity increases. As mentioned, excessive complexity is a source of risk, not only in business or in politics, but in engineering too. In short, do it simply. Be pragmatic.

2. Complexity – a new frontier

Now that we understand why measuring complexity may open new and exciting possibilities in CAE and CAD, let us take a closer look at what complexity is and how it can be incorporated in the engineering process by becoming a fundamental design attribute. In order to expose the nature of complexity, an important semantic clarification is due at this point: the difference between complex and complicated. A complicated system, such as a mechanical wristwatch, is indeed formed of numerous components – in some cases as many as one thousand – which are linked to each other but, at the same time, the system is also deterministic in nature. It cannot behave in an uncertain manner. It is therefore easy to manage. It is very complicated but has extremely low complexity. Complexity, on the other hand, implies the capacity to deliver surprises. This is why humans intuitively don’t like to find themselves in highly complex situations. In fact, highly complex systems can behave in a myriad of ways (called modes) and have the nasty habit of spontaneously switching mode, for example from nominal to failure. If the complexity in question is high, not only does the number of failure modes increase, but the effort necessary to cause catastrophic failure also decreases in proportion.

Highly complicated products do not necessarily have to be highly complex. It is also true that high complexity does not necessarily imply very many interconnected components. In fact, a system with very few components can be extremely difficult to understand and control.

And this brings us to our definition of complexity. Complexity is a function of two fundamental components:

- Structure. This is reflected via the topology of the information flow between the components in a system. Typically, this is represented via a Process Map or a graph in which the components are the nodes (vertices) of the graph, connected via links.

- Entropy. This is a fundamental quantity which measures the amount of uncertainty of the interactions between the components of the system.

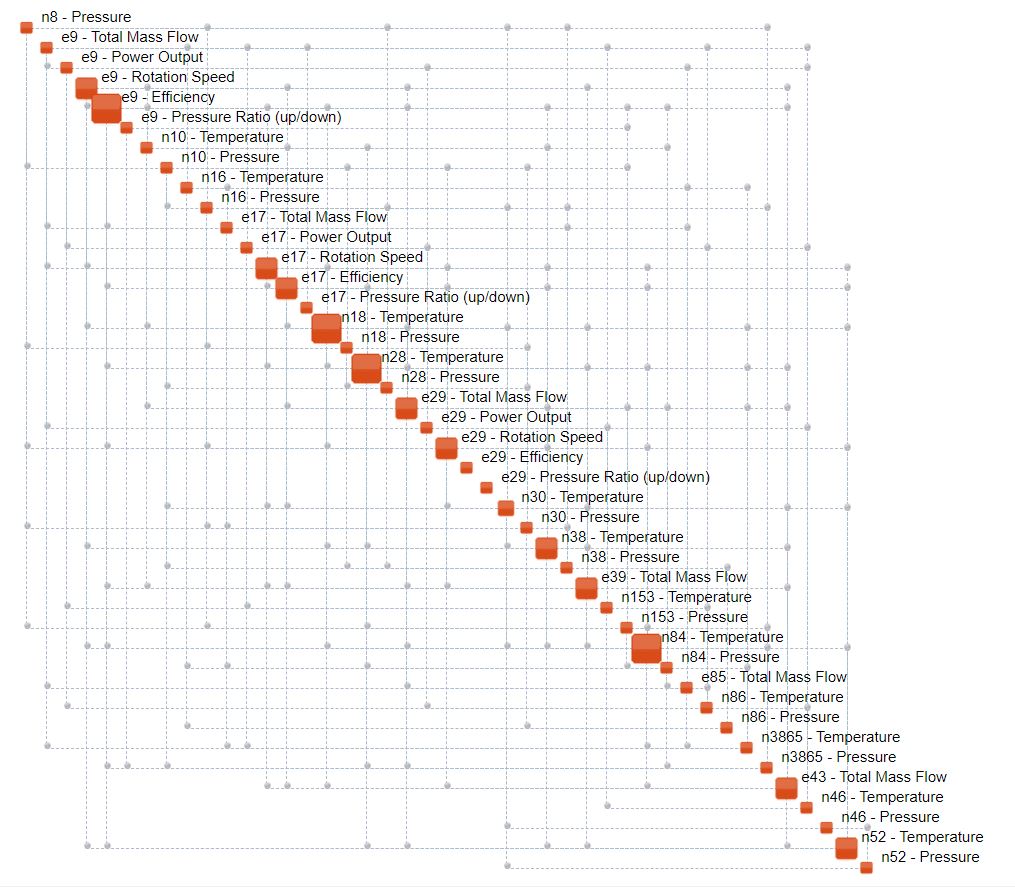

An example of a Process Map (Complexity Map) is shown in Figure 1.

Figure 1. Process Map of a CFD model of a power plant. Nodes are aligned along the diagonal of the map and significant relationships between them are indicated via blue connectors.

Obtaining a process map is simple. Two alternatives exist.

- Run a Monte Carlo Simulation with a numerical (e.g. FEM) model, producing a rectangular array in which the columns represent the variables (nodes of the map) and the rows correspond to different stochastic realizations of these variables.

- Collect sensor readings from a physical time-dependent system, building a similar rectangular array, in which the realizations of the variables are obtained by sampling the sensor channels at a specific frequency.
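The first alternative can be sketched as follows. The "model" below is a hypothetical stand-in for an FE run (two random inputs, two outputs), and the map is built by thresholding the absolute Pearson correlation between columns. That thresholding rule is an assumption used here only to illustrate the data layout; it is not the algorithm OntoSpace™ uses, which the text does not describe.

```python
import random


def run_monte_carlo(n_runs: int, seed: int = 0) -> list[list[float]]:
    """Return a rectangular array: one row per realization, one column per variable."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n_runs):
        thickness = rng.gauss(10.0, 0.5)                  # input (hypothetical)
        load = rng.gauss(1000.0, 50.0)                    # input (hypothetical)
        stress = load / thickness + rng.gauss(0.0, 1.0)   # output, with noise
        mass = 2.5 * thickness                            # output
        rows.append([thickness, load, stress, mass])
    return rows


def pearson(xs, ys) -> float:
    """Sample Pearson correlation; returns 0.0 if either column is constant."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / (var_x * var_y) ** 0.5


def process_map(rows, threshold: float = 0.3) -> list[list[int]]:
    """Symmetric 0/1 adjacency matrix: link i-j if |correlation| >= threshold."""
    columns = list(zip(*rows))
    n = len(columns)
    adjacency = [[0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            if abs(pearson(columns[i], columns[j])) >= threshold:
                adjacency[i][j] = adjacency[j][i] = 1
    return adjacency


names = ["thickness", "load", "stress", "mass"]
rows = run_monte_carlo(500)
adjacency = process_map(rows)
for i, name in enumerate(names):
    linked = [names[j] for j in range(len(names)) if adjacency[i][j]]
    print(f"{name}: {linked}")
```

The same `process_map` call would apply unchanged to an array of sampled sensor channels, since both alternatives produce the same rows-by-variables layout.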

Once such arrays are available, they may be processed by OntoSpace™ which directly produces the maps. A Process Map, together with its topology, reflects the functionality of a given system. Functionality, in fact, is determined by the way the system transmits information from inputs to outputs and also between the various outputs. In a properly functioning system at steady-state, the corresponding Process Map is stable and does not change with time. Evidently, if the system in question is deliberately driven into other modes of functioning – for example from nominal to maintenance – the map will change accordingly.

A key concept is that of the hub. Hubs are the nodes in the map which possess the highest degree (number of connections to other nodes). Hubs may be regarded as critical variables in a given system since their loss causes massive topological damage to a Process Map and therefore loss of functionality. Loss of a hub means one is on the path to failure. In ecosystems, hubs of the food chain are known as keystone species. Often, keystone species are innocent insects or even single-cell animals. Wipe one out and the whole ecosystem may collapse. Clearly, single-hub ecosystems are more vulnerable than multi-hub ones. However, no matter how many hubs a system has, it is fundamental to know them. The same concept applies to engineering of course. In a highly sophisticated system, very often even the experienced engineer who has designed it does not know all the hubs. One reason is that CAE still lacks so-called systems thinking, and models are built and analyzed in “watertight compartments” in a single-discipline setting. It is only when a holistic approach is adopted, sacrificing details for breadth, that one can establish the hubs of a given system in a significant manner. In effect, the closer you look the less you see!
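A minimal sketch of the hub concept, assuming the Process Map is available as a symmetric 0/1 adjacency matrix. The five-node map and its variable names are hypothetical; the code simply counts links per node, reports the highest-degree nodes, and compares the links that survive when a hub or a non-hub is removed.

```python
def node_degrees(adjacency):
    """Degree of each node = number of links stemming from it."""
    return [sum(row) for row in adjacency]


def find_hubs(adjacency, names):
    """Names of the nodes with the highest degree."""
    degrees = node_degrees(adjacency)
    top = max(degrees)
    return [name for name, degree in zip(names, degrees) if degree == top]


def link_count(adjacency):
    """Number of undirected links (each appears twice in a symmetric matrix)."""
    return sum(sum(row) for row in adjacency) // 2


def remove_node(adjacency, index):
    """Copy of the map with one node (its row and column) deleted."""
    return [
        [value for j, value in enumerate(row) if j != index]
        for i, row in enumerate(adjacency)
        if i != index
    ]


# Hypothetical map: "pressure" is linked to every other variable.
names = ["inlet_temp", "pressure", "flow_rate", "outlet_temp", "vibration"]
adjacency = [
    [0, 1, 0, 0, 0],
    [1, 0, 1, 1, 1],
    [0, 1, 0, 1, 0],
    [0, 1, 1, 0, 0],
    [0, 1, 0, 0, 0],
]

hubs = find_hubs(adjacency, names)
links_total = link_count(adjacency)
links_without_hub = link_count(remove_node(adjacency, names.index("pressure")))
links_without_leaf = link_count(remove_node(adjacency, names.index("vibration")))

print("hubs:", hubs)
print("links:", links_total, "| without hub:", links_without_hub,
      "| without non-hub:", links_without_leaf)
```

In this assumed map, losing the hub removes four of the five links, while losing a peripheral node removes only one, which illustrates why hub loss causes "massive topological damage".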

3. Using complexity to measure robustness

How can complexity be used to define and measure robustness? There exist many “definitions” of robustness. None of them is universally accepted. Most of these definitions talk of insensitivity to external disturbances. It is often claimed that low scatter in performance reflects high robustness and vice versa. But scatter really reflects quality, not robustness. Besides, such “definitions” do not allow engineers to actually measure the overall robustness of a given design. Complexity, on the other hand, not only allows us to establish a new and holistic definition of robustness, but it also makes it possible to actually measure it, providing a single number which reflects “the global state of health” of the system in question. We define robustness as the ability of a system to maintain functionality. How do you measure this? In order to explain this new concept it is necessary to introduce the concept of critical complexity. Critical complexity is the maximum amount of complexity that any system is able to sustain before it starts to break down. Every system possesses such a limit. At critical complexity, systems become fragile and their corresponding Process Maps start to break up. The critical complexity threshold is determined by OntoSpace™ together with the current value of complexity. The global robustness of a system may therefore be expressed as the distance that separates its current complexity from the corresponding critical complexity. In other words, R = (C_cr – C)/C_cr, where C is the system complexity and C_cr is the critical complexity. With this definition in mind it now becomes clear why Ockham’s rule so strongly favours simpler solutions! A simpler solution is farther from its corresponding criticality threshold than a more complex one – it is intrinsically more robust.
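As a worked illustration of R = (C_cr – C)/C_cr, the sketch below evaluates the formula for two designs that share the same critical complexity. The numerical values are hypothetical and chosen only to show how the index behaves.

```python
def topological_robustness(c: float, c_cr: float) -> float:
    """R = (C_cr - C) / C_cr: relative distance from critical complexity."""
    if c_cr <= 0:
        raise ValueError("critical complexity must be positive")
    return (c_cr - c) / c_cr


# Hypothetical values: both designs share the same critical complexity.
c_cr = 12.0
r_simple = topological_robustness(c=6.0, c_cr=c_cr)
r_complex = topological_robustness(c=9.0, c_cr=c_cr)

print(f"simpler design:      R = {r_simple:.2f}")
print(f"more complex design: R = {r_complex:.2f}")
```

R equals 1 for a system with zero complexity, falls to 0 at critical complexity, and becomes negative if the measured complexity exceeds the threshold.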

The new complexity-based definition of robustness may also be called topological robustness as it quantifies the “resilience” of the system’s Process Map in the face of external and internal perturbations (noise). However, the Process Map itself carries additional fundamental information that establishes additional mechanisms to assess robustness in a more profound way. It is obvious that a multi-hub system is more robust – the topology of its Process Map is more resilient, its functionality is more protected – than a system depending on a small number of hubs. A simple way to quantify this concept is to establish the degree of each node in the Process Map – this is done by simply counting the connections stemming from each node – and to plot the degrees in increasing order. This is known as the connectivity histogram. A spiky plot, known also as a Zipfian distribution, points to a fragile system, while a flatter one reflects a less vulnerable Process Map topology.

The density of a Process Map is also a significant parameter. Maps with very low density (below 5-10%) point to systems with very little redundancy, i.e. with very little fail-safe capability. Highly dense maps, on the other hand, reflect situations in which it will be very difficult to make modifications to the system’s performance, precisely because of the high connectivity. In such cases, introducing a change at one node will immediately impact other nodes. Such systems are “stiff” in that reaching acceptable compromises is generally very difficult and often the only alternative is re-design.
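The connectivity histogram and the map density can be sketched for two hypothetical six-node maps: a star (one hub) and a ring (every node equally connected). Two assumptions are made here: density is taken as the fraction of possible links that are present, so that it can be read as a percentage, and `spikiness` (maximum degree divided by mean degree) is an ad hoc indicator introduced for this example, not a quantity defined in the text.

```python
def star_map(n):
    """Node 0 is linked to every other node (single-hub topology)."""
    adjacency = [[0] * n for _ in range(n)]
    for j in range(1, n):
        adjacency[0][j] = adjacency[j][0] = 1
    return adjacency


def ring_map(n):
    """Each node is linked to its two neighbours (no dominant hub)."""
    adjacency = [[0] * n for _ in range(n)]
    for i in range(n):
        j = (i + 1) % n
        adjacency[i][j] = adjacency[j][i] = 1
    return adjacency


def connectivity_histogram(adjacency):
    """Node degrees sorted in increasing order."""
    return sorted(sum(row) for row in adjacency)


def map_density(adjacency):
    """Assumed definition: links present divided by links possible."""
    n = len(adjacency)
    links = sum(sum(row) for row in adjacency) // 2
    return links / (n * (n - 1) / 2)


def spikiness(adjacency):
    """Ad hoc indicator: maximum degree relative to the mean degree."""
    degrees = connectivity_histogram(adjacency)
    return max(degrees) / (sum(degrees) / len(degrees))


for label, adjacency in (("star", star_map(6)), ("ring", ring_map(6))):
    print(label,
          connectivity_histogram(adjacency),
          f"density={map_density(adjacency):.2f}",
          f"spikiness={spikiness(adjacency):.2f}")
```

The star yields a spiky histogram (five nodes of degree 1 and one of degree 5), the ring a flat one (all degrees equal to 2), even though their densities are comparable.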

4. Complexity-based model validation

Models are only models. Remember how many assumptions one must make to write a partial differential equation (PDE) describing the vibrations of a beam? The beam is long and slender, the constraints are perfect, the displacements are small, shear effects are neglected, rotational inertia is neglected, the material is homogeneous, the material is elastic, sections remain plane, loads are applied far from constraints, etc., etc. How much physics has been lost in the process? 5%? 10%? But that’s not all. The PDE must be discretized using finite difference or finite element schemes. Again, the process implies an inevitable loss of physical content. If that were not enough, very often, because of high CPU consumption, models are projected onto so-called response surfaces. Needless to say, this too removes physics. At the end of the day we are left with a numerical artefact which, if one is lucky (and has plenty of grey hair), correctly captures 80-90% of the real thing. Many questions may arise at this point. For instance, one could ask how relevant an optimization exercise is which, exposing such numerical constructs to a plethora of algorithms, delivers an improvement of performance of, say, 5%. This and other similar questions bring us to a fundamental and probably most neglected aspect of digital simulation: that of model credibility and model validation.

Knowing how much one can trust a digital model is of paramount importance:

- Models are supposed to be cheaper than the real thing – physical tests are expensive.

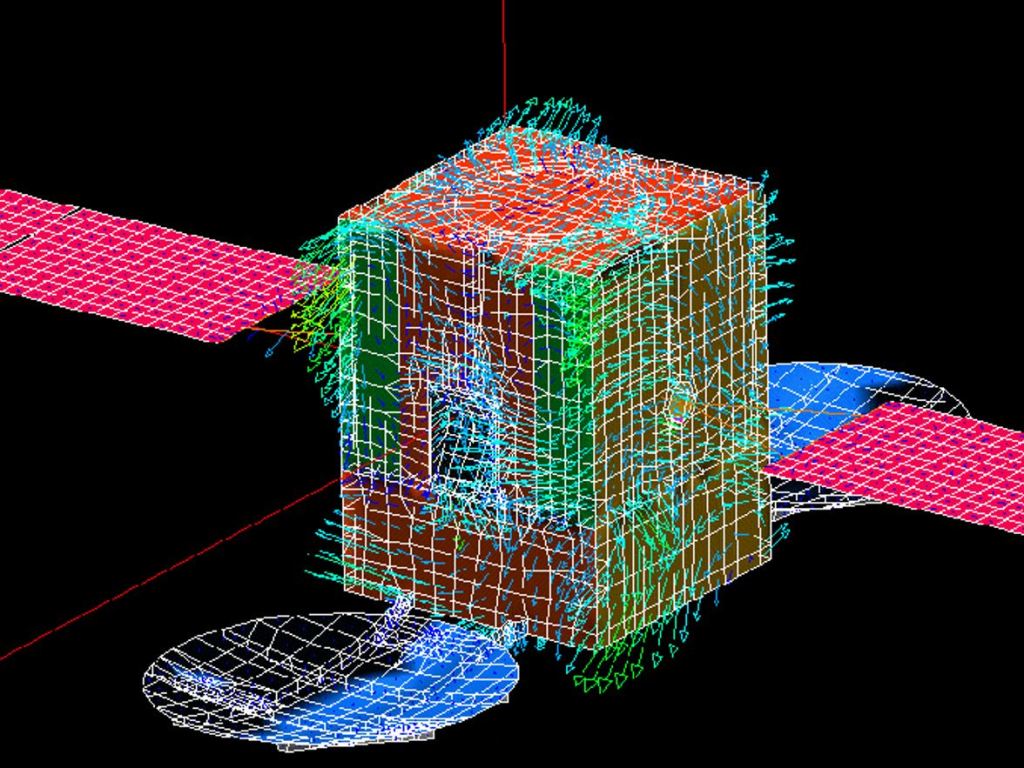

- Some things just cannot be tested (e.g. spacecraft in orbit).

- If a model is supposed to replace a physical test but one cannot quantify how credible the model is (80%, 90% or maybe 50%) how can any claims or decisions based on that model be taken seriously?

- You have a model with one million elements and you are seriously considering mesh refinement in order to get “more precise answers” but you cannot quantify the level of trust of your model. How significant is the result of the mesh refinement?

- You use a computer model to deliver an optimal design but you don’t know the level of trust of the model. It could very well be 70% or 60%. Or less. You then build the real thing. Are you sure it is really optimal?

But is it possible to actually measure the level of credibility of a computer model? The answer is affirmative. Based on complexity technology, a single physical test and a single simulation are sufficient to quantify the level of trust of a given computer model, provided the phenomenon in question is time-dependent. The process of measuring the quality of the model is simple:

- Run a test and collect results (outputs) at a set of points (sensors). Arrange them in a matrix.

- Run the computer simulation, extracting results at the same points and with the same frequency. Arrange them in a matrix.

- Measure the complexity of both data sets. You will obtain a Process Map and the associated complexity for each case, C_t and C_m (test and model, respectively).

The following scenarios are possible:

- The values of complexity for the two data sets are similar: your model is good and credible.

- The test results prove to be more complex than simulation results: your model misses physics or is based on wrong assumptions.

- The simulation results prove to be more complex than the physical test results: your model probably generates noise.

But clearly there is more. Complexity is equivalent to structured information. It is not just a number. If the complexities of the test and simulation results are equal (or very similar) one has satisfied only the necessary condition of model validity. A stronger sufficient condition requires in addition the following to hold:

- The topologies of the two Process Maps are identical.

- The hubs of the maps are the same.

- The densities of the maps (i.e. ratio of links to nodes) are the same.

- The entropy content in both cases is the same.
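The structural part of the sufficient condition can be sketched as a comparison of two adjacency matrices. The two four-node maps are hypothetical, the density follows the parenthetical definition given in the list (ratio of links to nodes), and the entropy check is omitted because the text does not say how entropy is computed.

```python
def hub_indices(adjacency):
    """Indices of the nodes with the highest degree."""
    degrees = [sum(row) for row in adjacency]
    top = max(degrees)
    return {i for i, degree in enumerate(degrees) if degree == top}


def links_per_node(adjacency):
    """Density as defined in the list above: ratio of links to nodes."""
    links = sum(sum(row) for row in adjacency) // 2
    return links / len(adjacency)


def compare_maps(test_map, model_map, tol=1e-9):
    """Structural checks only; the entropy comparison is not covered here."""
    return {
        "same_topology": test_map == model_map,
        "same_hubs": hub_indices(test_map) == hub_indices(model_map),
        "same_density": abs(links_per_node(test_map) - links_per_node(model_map)) <= tol,
    }


# Hypothetical maps with the same number of links but different hubs.
test_map = [
    [0, 1, 1, 1],
    [1, 0, 0, 0],
    [1, 0, 0, 0],
    [1, 0, 0, 0],
]
model_map = [
    [0, 1, 0, 0],
    [1, 0, 1, 1],
    [0, 1, 0, 0],
    [0, 1, 0, 0],
]

report = compare_maps(test_map, model_map)
for check, passed in report.items():
    print(f"{check}: {passed}")
```

The two assumed maps have identical density, yet the model places its hub on a different variable than the test does, so a comparison based on a single aggregate number would not expose the mismatch.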

The measure of model credibility, or level of trust, may now be quantified as:

MC = abs[ (C_t – C_m)/C_t ]

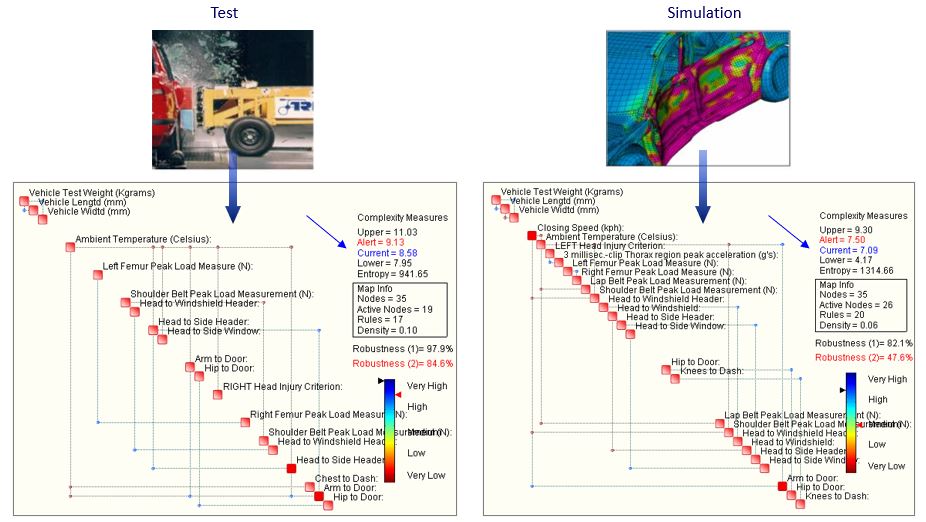

Figure 2 illustrates the Process Maps obtained from a crash test (left) and simulation (right). The simulation model has a complexity of 6.53, while the physical test 8.55. This leads to a difference of approximately 23%. In other words, we may conclude that according to the weak condition, the model captures approximately 77% of what the test has to offer. Moreover, the Process Maps are far from being similar. Evidently, the model still requires a substantial amount of work.

Figure 2. Process Maps obtained for a physical car crash-test (left) and for a simulation (right).
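The crash example can be checked numerically. The sketch below evaluates MC for the two complexity values quoted above and maps the outcome onto the three scenarios listed earlier. The 5% tolerance used to decide whether two complexities are "similar" is an assumption made for illustration; the text does not specify one.

```python
def complexity_mismatch(c_test: float, c_model: float) -> float:
    """MC = |C_t - C_m| / C_t, the relative difference in complexity."""
    if c_test <= 0:
        raise ValueError("test complexity must be positive")
    return abs(c_test - c_model) / c_test


def diagnose(c_test: float, c_model: float, tol: float = 0.05) -> str:
    """Map the comparison onto the three scenarios; tol is an assumed threshold."""
    if complexity_mismatch(c_test, c_model) <= tol:
        return "complexities similar: necessary condition met"
    if c_test > c_model:
        return "test more complex: model may miss physics or rest on wrong assumptions"
    return "model more complex: model probably generates noise"


# Values quoted for the crash test (8.55) and the simulation (6.53).
mc = complexity_mismatch(c_test=8.55, c_model=6.53)
print(f"MC = {mc:.3f}")
print(diagnose(8.55, 6.53))
```

The result, about 0.236, matches the difference of roughly 23% quoted for Figure 2, and the diagnosis falls into the second scenario: the test is more complex than the simulation.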

The same index may also be used to “measure the difference” between two models in which:

- The FE meshes have different bandwidth (a fine and a coarse mesh are built for a given problem).

- One model is linear, the other is non-linear (one is not sure if a linear model is suitable for a given problem).

- The same model is run on 1 CPU and then on 4 CPUs (it is known that with explicit models this often leads to different results).

5. Complexity-based CAD

It is evident to every engineer that a simpler solution to a given problem is almost always:

- Easier to design

- Easier to assemble/manufacture

- Easier to service/repair

- Intrinsically more robust

The idea behind complexity-based CAD is simple: design a system that is as simple as possible but which fulfils functional requirements and constraints. Now that complexity may be measured in a rational manner, it can become a specific design objective and target and we may put the “Complexity X Uncertainty = Fragility” philosophy into practice. One way to proceed is as follows:

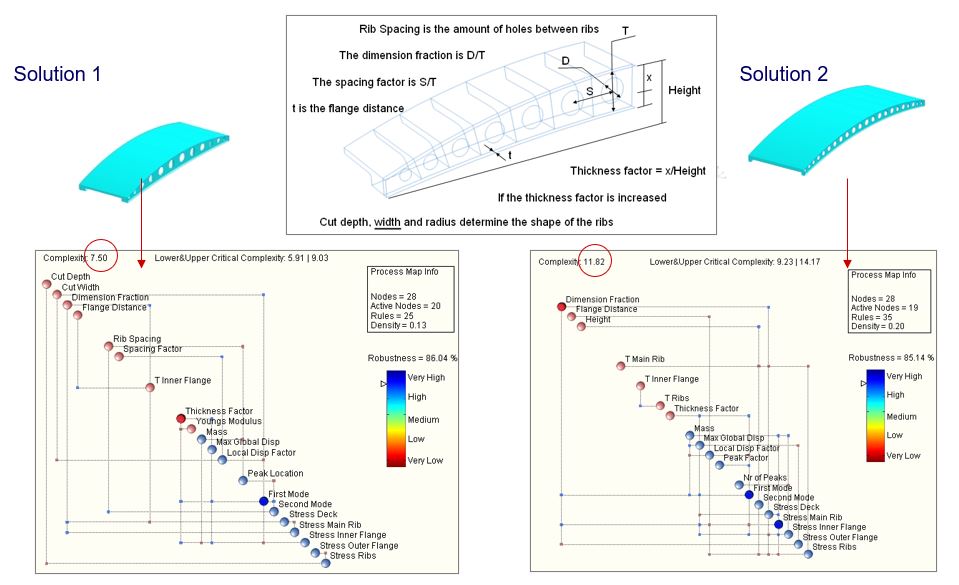

- Establish a nominal parametric model of a system (see example in Figure 3, illustrating a pedestrian bridge).

- Generate a family of topologically feasible solutions using Monte Carlo Simulation (MCS) to randomly perturb all the dimensions and features of the model.

- Generate a mesh for each Monte Carlo realization.

- Run an FE solver to obtain stresses and natural frequencies.

- Process the MCS with OntoSpace™.

- Define constraints (e.g. dimensions) and performance objectives (e.g. frequencies, mass).

- Obtain a set of solutions which satisfy both the constraints as well as the performance objectives.

- Obtain the complexity for each solution.

- Select the solution with the lowest complexity.

Figure 3. Parametric quarter-model of a pedestrian bridge.
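The final steps of the procedure above (filter by constraints and performance objectives, then pick the least complex solution) can be sketched as follows. The candidate data, field names and limits are hypothetical; in practice the performance values would come from the FE runs and the complexity values from the complexity measurement of each solution.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Candidate:
    name: str
    mass_kg: float
    first_frequency_hz: float
    max_stress_mpa: float
    complexity: float


def is_feasible(c: Candidate, max_mass_kg: float, min_frequency_hz: float,
                max_stress_mpa: float) -> bool:
    """True if the candidate meets every (assumed) constraint and objective."""
    return (c.mass_kg <= max_mass_kg
            and c.first_frequency_hz >= min_frequency_hz
            and c.max_stress_mpa <= max_stress_mpa)


def select_simplest(candidates, max_mass_kg, min_frequency_hz,
                    max_stress_mpa) -> Optional[Candidate]:
    """Among feasible candidates, return the one with the lowest complexity."""
    feasible = [c for c in candidates
                if is_feasible(c, max_mass_kg, min_frequency_hz, max_stress_mpa)]
    if not feasible:
        return None
    return min(feasible, key=lambda c: c.complexity)


# Hypothetical Monte Carlo realizations of a parametric model.
candidates = [
    Candidate("A", mass_kg=1200.0, first_frequency_hz=3.1, max_stress_mpa=180.0, complexity=7.9),
    Candidate("B", mass_kg=1210.0, first_frequency_hz=3.0, max_stress_mpa=185.0, complexity=6.1),
    Candidate("C", mass_kg=1500.0, first_frequency_hz=2.2, max_stress_mpa=240.0, complexity=4.2),
]

best = select_simplest(candidates, max_mass_kg=1300.0,
                       min_frequency_hz=2.8, max_stress_mpa=200.0)
print("selected:", best.name if best else "no feasible solution")
```

Candidate C has the lowest complexity but violates the assumed limits, so the selection falls on B, the simplest design among those that meet the requirements.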

The above process may be automated using a commercial CAD system with meshing capability, a multi-run environment which supports Monte Carlo simulation and an FE solver. In the case of our bridge example, Figure 4 illustrates two solutions possessing very similar mass, natural frequencies, stresses and robustness but dramatically different values of complexity. The solution on the right has a complexity of 8.5, while the one on the left has a complexity of 5.4.

Figure 4. Two solutions to the pedestrian bridge. Note the critical variables (hubs) indicated in red (inputs) and blue (outputs).

6. Conclusions

The complexity of man-made products, and of the related manufacturing processes, is growing quickly, and since high complexity inevitably leads to fragility, these products are becoming increasingly exposed to risk. At the same time, the issues of risk and liability management are becoming crucial in today’s turbulent economy. But highly complex and sophisticated products are characterized by a huge number of possible failure modes and it is a practical impossibility to analyze them all. Therefore, the alternative is to design systems that are intrinsically robust, i.e. that possess a built-in capacity to absorb both expected and unexpected random variations of operational conditions, without failing or compromising their function. Robustness is reflected in the fact that the system is no longer optimal, a property that is linked to a single and precisely defined operational condition, but remains acceptable (fit for the function) in a wide range of conditions. In fact, contrary to popular belief, robustness and optimality are mutually exclusive. Complexity-based design, i.e. a design process in which complexity becomes a design objective, opens new avenues for engineering. While optimal design leads to specialization and, consequently, to fragility outside of the portion of the design space in which the system is indeed optimal, complexity-based design yields intrinsically robust systems. The two paradigms may therefore be compared as follows:

- Old Paradigm: Maximize performance, while, for example, minimizing mass.

- New Paradigm: Reduce complexity accepting compromises in terms of performance.

A fundamental philosophical principle that sustains the new paradigm is L. Zadeh’s Principle of Incompatibility: High complexity is incompatible with high precision. The more complex something is, the less precise we can be about it. A few examples: the global economy, our society, climate, traffic in a large city, the human body, etc., etc. What this means is that you cannot build a precise (FE) model of a highly sophisticated system. And it makes little sense to insist – millions of finite elements will not squeeze precision from where there isn’t any. Nature places physiological limits on the amount of precision in all things. The implications are clear. Highly sophisticated and complex products and systems cannot be designed via optimization, precisely because they cannot be described with high precision. In fact, performance maximization (optimization) is an exercise of precision and this, as we have seen, is intrinsically limited by Nature. For this very reason, models must be realistic, not precise.
