Combinatorial optimization is the process of searching for maxima (or minima) of an objective function F whose domain is a discrete but large configuration space.

The space of possible solutions is typically too large to search exhaustively using pure brute force. In some cases, problems can be solved exactly using Branch and Bound techniques. However, in other cases no exact algorithms are feasible, and randomized search algorithms must be employed, such as:

- Random-restart hill-climbing

- Simulated annealing

- Genetic algorithms

- Tabu search

A large part of the field of Operations Research involves algorithms for solving combinatorial optimization problems.

Applications for combinatorial optimization include, but are not limited to:

- Logistics

- Supply chain optimization

- Developing the best airline network of spokes and destinations

- Deciding which taxis in a fleet to route to pick up fares

- Determining the optimal way to deliver packages

- Working out the best allocation of jobs to people

- Designing water distribution networks

- Earth Science problems (e.g. reservoir flow-rates)

Another example is that of portfolio design, whereby a set of stocks is selected from a large universe of stocks is extracted using a particular criterion and with a particular objective in mind. Low complexity portfolios are known to be highly diversified and are therefore of interest to investors.

The selection of low complexity portfolios, i.e. subsets of stocks belonging to a given basket, when approached from a classical optimization perspective is an extremely difficult problem to solve as it is a combinatorial problem. In fact, one way to define the problem is in combinatorial terms as follows:

Given a set of N securities, select a subset of M elements such that its complexity is minimum

In the case of the Dow Jones Industrial index, for example, N=30 and M=15 and the number of combinations to analyze is C(N,M) = 155.117.520. In the case of the NASDAQ 100, with N=100 and M=25 we obtain C(N,M) = 2.425 E+23! For large baskets containing, for example N=1000 securities, with a portfolio size of M=25 leads to C(N,M) = 4.764186253 E+49. Such cases are clearly beyond the reach of the most powerful supercomputers. Evidently, conventional optimization is an impractical approach and a different methodology must be devised.

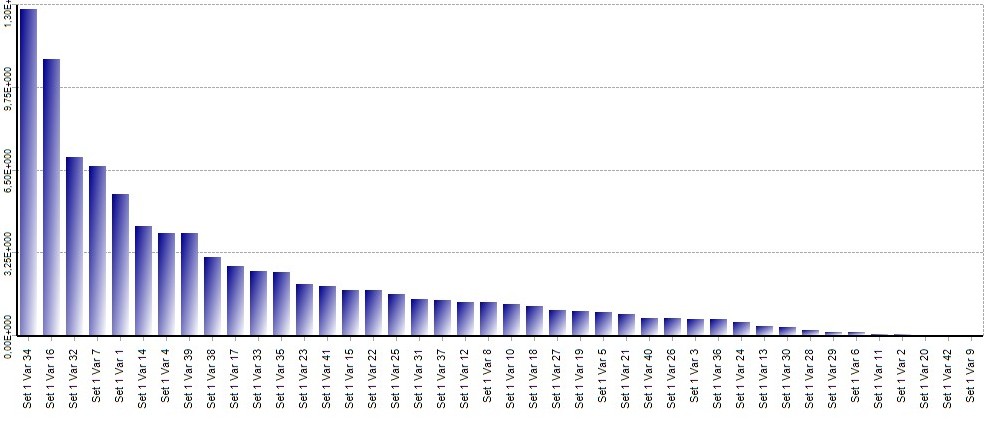

Such an alternative exists and is called Portfolio Complexity Profiling (PCP). Extracting Low-Complexity portfolios even from huge stock ensembles takes minutes on a PC and with clever programming may even run in a very high frequency context. A Complexity Profile is a standard output of a complexity analysis. An example of a Complexity Profile of a 42-dimensional data set is shown below.

Once the profile is available, it is easy to pick, for example a subset of 5 components such that they form a minimum complexity set. These are: 9, 42, 20, 2 and 11. The following is an example of a maximum complexity 4 element set: 34, 16, 32 and 7. What minimum complexity sets accomplish is the lowest degree of coupling between elements. The inverse may be said of high complexity sets. In the case of investment portfolios it is easy to grasp the significance of this.

The idea, at this point, is to identify classes of problems, or to transform classical problems in a way that they may be formulated in terms of minimizing (or maximizing) complexity. It would be of great interest to transform certain brachistochrone-type variational problems in this manner.

0 comments on “On Combinatorial Optimization and Complexity”